The Turing Test, formulated by the brilliant mathematician Alan Turing, has long been a cornerstone in the discussion of artificial intelligence. It challenges the boundaries of what it means for a machine to think and engage with humans in a seemingly intelligent manner. As we dive into its historical context, philosophical implications, and contemporary relevance, we uncover the layers that shape our understanding of intelligence and consciousness.

This exploration highlights not only Turing’s visionary contributions but also the subsequent evolution of AI technologies influenced by his foundational ideas. From early programs that flirted with the idea of machine intelligence to modern AI applications that push these boundaries further, the Turing Test remains a pivotal reference point in the ongoing conversation about what it means for machines to ‘think.’

The historical context of the Turing Test in artificial intelligence development

The Turing Test, conceived by British mathematician and logician Alan Turing in 1950, represents a significant milestone in the development of artificial intelligence (AI). Turing introduced the test in his seminal paper “Computing Machinery and Intelligence,” which opens with the question, “Can machines think?” Judging that question too ambiguous to settle directly, he replaced it with a practical test he called the imitation game: whether a machine’s conversational behavior can be made indistinguishable from that of a human. This notion sparked widespread interest and debate around the capabilities of machines, laying the groundwork for future explorations into AI.

The Turing Test grew out of Turing’s desire to provide a clear, observable criterion for machine intelligence. At a time when computers were still in their infancy, Turing envisioned machines that could hold their own in human conversation. His paper also discussed learning machines, anticipating ideas that later became central to AI. By proposing the test, Turing challenged researchers to build systems that could process natural language and respond as a human would, thus pushing the boundaries of technology.

Significance of Turing’s Contributions

Turing’s contributions to the field of AI are profound and multi-faceted. His work on the Turing Test not only established a benchmark for judging machine intelligence but also encouraged further research into computational theories and cognitive science. It laid the foundation for early AI programs that sought to mimic human-like interactions. One prominent example is ELIZA, created by Joseph Weizenbaum in the mid-1960s. ELIZA simulated conversation by using pattern matching and substitution. It never passed the Turing Test in any formal sense, but some users attributed genuine understanding to it, which showed how readily simple text-based dialogue can come across as human.
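To make the idea of pattern matching and substitution concrete, here is a minimal sketch in Python. It is not Weizenbaum’s original script: the rules, the pronoun table, and the function names (`reflect`, `respond`) are all hypothetical and chosen only for illustration.

```python
import re

# Hypothetical rules: (pattern, response template). Not ELIZA's original script.
RULES = [
    (re.compile(r"\bi need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (.+)", re.IGNORECASE), "Tell me more about your {0}."),
]

# Pronoun swaps applied to the captured fragment (the "substitution" step).
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are",
               "you": "I", "your": "my"}

FALLBACK = "Please go on."


def reflect(fragment: str) -> str:
    """Swap first- and second-person words in the captured fragment."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(utterance: str) -> str:
    """Return the reply for the first rule that matches, else a stock phrase."""
    text = utterance.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(reflect(match.group(1)))
    return FALLBACK


print(respond("I need a break from my job."))
# Why do you need a break from your job?
```

Nothing in this sketch models meaning: the reply is assembled from the user’s own words, which is why such programs can seem attentive in short exchanges and fall apart in longer ones.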

Another early program influenced by Turing’s ideas is SHRDLU, developed by Terry Winograd at MIT between 1968 and 1970. SHRDLU understood and carried out natural language commands within a simulated blocks world, showing that a system could track context and meaning in conversation when its domain was tightly restricted. These early programs gave concrete form to Turing’s vision of machines capable of human-like interaction, paving the way for modern AI technologies.

Turing’s work not only emphasized the theoretical aspects of machine intelligence but also motivated practitioners to build systems that embody these principles. His legacy continues to inspire ongoing advancements in AI, ensuring that the Turing Test remains a pivotal reference point in discussions about the capabilities and limitations of artificial intelligence.

The philosophical implications of the Turing Test on consciousness and intelligence

The Turing Test, conceived by Alan Turing in 1950, serves as a pivotal benchmark in evaluating a machine’s ability to exhibit intelligent behavior indistinguishable from that of a human. The implications of this test stretch far beyond mere conversational capability, delving into the realms of consciousness and intelligence. The essence of the Turing Test raises profound questions about the nature of thought and self-awareness in machines as compared to humans.

In the context of the Turing Test, consciousness can be interpreted as awareness and subjective experience, while intelligence refers to the ability to acquire knowledge, solve problems, and adapt to new situations. The Turing Test measures intelligence through behavior rather than internal states, which leads to the philosophical debate over whether a machine can truly possess consciousness or merely simulates understanding. A distinction that is critical in this debate is the one between syntactic processing (manipulating symbols according to rules) and semantic understanding (comprehending what the symbols mean). The Turing Test offers no insight into the internal processes of machines; it considers only their outputs.

Philosophical perspectives on machine intelligence versus human intelligence

The debate on machine intelligence versus human intelligence is enriched by diverse philosophical perspectives, each offering unique insights into the capabilities of artificial intelligence. One prominent stance is that of functionalism, which posits that mental states can be defined by their functional roles, irrespective of the material they are made of. This perspective suggests that if a machine passes the Turing Test, it can be considered intelligent, as it performs functions that correspond to human intelligence.

In contrast, other philosophers like John Searle advocate for a more skeptical view, most notably through the Chinese Room argument. Searle contends that even if a machine appears to understand language (and thereby passes the Turing Test), it does not genuinely comprehend the meaning behind the words. This argument implies that true intelligence encompasses more than mere manipulation of symbols; it demands a level of understanding that, on Searle’s account, symbol manipulation alone cannot supply.

The implications of passing the Turing Test have garnered attention from notable thinkers. For instance, philosopher Daniel Dennett argues that if a machine can convincingly engage in unrestricted conversation and exhibit behaviors indistinguishable from those of a human, it should be regarded as possessing a form of intelligence. On the other hand, linguist Noam Chomsky has questioned whether imitating linguistic behavior reveals anything about intelligence or understanding, treating the question of whether machines think as largely a matter of how we choose to use the word.

In examining the philosophical implications of the Turing Test, it becomes evident that the conversation about machine intelligence versus human intelligence extends beyond the capabilities of artificial systems. It challenges our understanding of consciousness itself and raises essential questions about what it means to think, understand, and be aware. This ongoing discourse continues to shape the development of artificial intelligence and our societal perceptions of what it means to be truly intelligent.

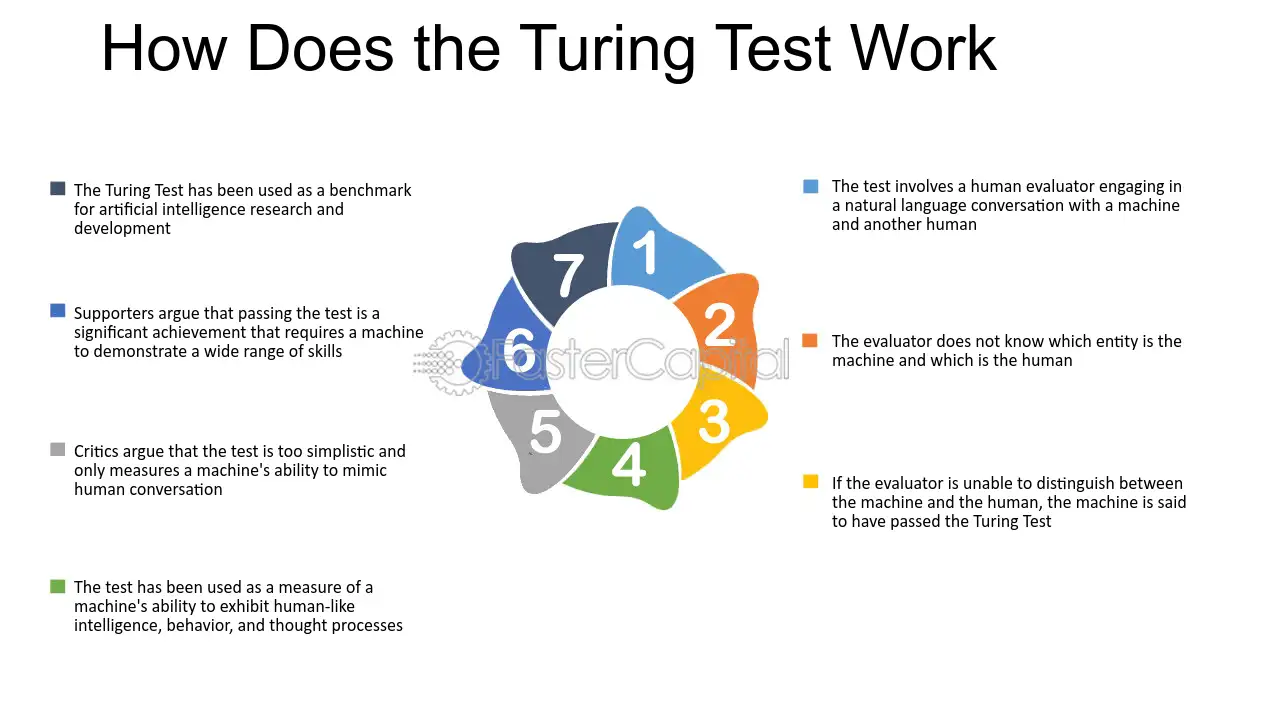

The methodology of conducting a Turing Test

The Turing Test, proposed by Alan Turing in 1950, serves as a criterion for determining whether a machine exhibits intelligent behavior equivalent to, or indistinguishable from, that of a human. The methodology for conducting a Turing Test is vital to ensure a fair and systematic approach to evaluating the machine’s capabilities. This involves several steps to set up the test and analyze the results effectively.

Setting up a Turing Test involves a structured process, where three primary roles must be defined: the human evaluator, the machine, and the human participant. The evaluator is tasked with assessing responses from both the machine and the human participant, without knowing which is which. The machine is the entity being tested for its ability to mimic human conversational patterns, while the human participant serves as a baseline for comparison. The setup typically includes a computer interface for communication, ensuring that the evaluator cannot see or hear the entities involved, thereby eliminating bias.

Roles and Responsibilities

The roles of each participant in the Turing Test are crucial for its integrity:

- Human Evaluator: This individual conducts the interrogation process, asking a series of questions designed to elicit responses that showcase the capabilities of both the machine and the human. The evaluator must remain neutral and objective, relying solely on the quality of the responses received.

- Machine: The machine’s objective is to generate responses that are as human-like as possible. This involves utilizing natural language processing algorithms and learning from extensive datasets to mimic human conversational nuances.

- Human Participant: The human acts as a control subject, providing responses that reflect genuine human thoughts and emotions. Their reactions serve as a standard for the evaluator to measure against the machine’s responses.
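The blind setup described above can be sketched as a small harness. This is an illustration under stated assumptions, not a standard protocol: the names `run_trial` and `identification_rate`, the anonymous labels "A" and "B", and the idea of modelling the evaluator as a function that returns the label it believes is the machine are all hypothetical.

```python
import random
from typing import Callable, Dict, List, Tuple

Responder = Callable[[str], str]
Transcript = List[Tuple[str, Dict[str, str]]]
# The judge sees only labelled answers and returns the label it thinks is the machine.
Judge = Callable[[Transcript], str]


def run_trial(questions: List[str], machine: Responder, human: Responder,
              judge: Judge, rng: random.Random) -> bool:
    """Run one blind trial; return True if the judge identifies the machine."""
    labels = ["A", "B"]
    rng.shuffle(labels)  # hide which participant sits behind which label
    machine_label, human_label = labels
    assignment = {machine_label: machine, human_label: human}
    transcript: Transcript = []
    for question in questions:
        answers = {label: assignment[label](question) for label in ("A", "B")}
        transcript.append((question, answers))
    return judge(transcript) == machine_label


def identification_rate(n_trials: int, questions: List[str], machine: Responder,
                        human: Responder, judge: Judge, seed: int = 0) -> float:
    """Fraction of trials in which the judge picked out the machine."""
    rng = random.Random(seed)
    correct = sum(run_trial(questions, machine, human, judge, rng)
                  for _ in range(n_trials))
    return correct / n_trials
```

Under these assumptions, a rate near 0.5 means the evaluator is doing no better than guessing, while a rate near 1.0 means the machine is easy to spot.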

Turing’s paper specifies no scoring rubric beyond the evaluator’s final judgment about which participant is the machine. In practice, however, evaluators tend to weigh several factors when assessing responses:

- Relevance: The degree to which the response addresses the question posed, showcasing an understanding of context and content.

- Coherence: The logical flow and clarity in the machine’s responses, which should be comparable to those of the human participant.

- Creativity: The ability of the machine to generate original thoughts or humorous responses that a typical human might produce in conversation.

- Emotional Intelligence: The machine’s capability to recognize and appropriately respond to emotional cues, which enhances the illusion of human-like interaction.
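If an evaluator wanted to record these four factors explicitly, one possible approach is a simple rating sheet. The sketch below is an assumption made for illustration: the 1 to 5 scale, the equal weighting, and the names `Rating` and `score_gap` are hypothetical and are not part of Turing’s proposal.

```python
from dataclasses import dataclass

CRITERIA = ("relevance", "coherence", "creativity", "emotional_intelligence")


@dataclass(frozen=True)
class Rating:
    """One evaluator's ratings for one participant, on an assumed 1-5 scale."""
    relevance: int
    coherence: int
    creativity: int
    emotional_intelligence: int

    def mean(self) -> float:
        """Equal-weight average of the four criteria."""
        values = [getattr(self, name) for name in CRITERIA]
        for value in values:
            if not 1 <= value <= 5:
                raise ValueError("ratings must be on the assumed 1-5 scale")
        return sum(values) / len(values)


def score_gap(machine: Rating, human: Rating) -> float:
    """Human mean minus machine mean; values near zero mean similar ratings."""
    return human.mean() - machine.mean()


machine = Rating(relevance=4, coherence=5, creativity=2, emotional_intelligence=3)
human = Rating(relevance=5, coherence=5, creativity=4, emotional_intelligence=4)
print(score_gap(machine, human))  # 1.0
```

A small gap would suggest the evaluator rated the two participants similarly, though a rating sheet of this kind supplements the evaluator’s overall judgment rather than replacing it.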

In conclusion, the methodology of conducting a Turing Test requires a well-defined structure involving clearly delineated roles, a controlled environment for interaction, and specific criteria for evaluating responses. This systematic approach helps in maintaining the validity of the test and provides insights into the machine’s capacity to emulate human-like intelligence.

The limitations and criticisms of the Turing Test as a measure of intelligence

The Turing Test, proposed by Alan Turing in 1950, is a pivotal concept in the field of artificial intelligence (AI) that aims to determine whether a machine can exhibit intelligent behavior indistinguishable from that of a human. Despite its historical significance, the Turing Test faces numerous criticisms regarding its effectiveness as a true measure of intelligence. Critics argue that the test does not adequately assess the depth of cognitive abilities or understanding, focusing instead on superficial mimicry of human conversation.

One major limitation of the Turing Test is its reliance on language as the primary medium of evaluation. By emphasizing linguistic ability, the test overlooks other critical aspects of intelligence such as reasoning, problem-solving, and emotional understanding. A machine might successfully imitate human conversation without possessing any genuine comprehension of the content it generates. As John Searle’s Chinese Room argument illustrates, a program could process symbols and generate appropriate responses without any understanding of the language itself. This highlights a fundamental flaw in equating linguistic performance with true intelligence.

Expert Criticisms and Alternative Measures

Experts have proposed various alternative measures for evaluating AI systems that address the inherent shortcomings of the Turing Test. These alternatives emphasize a broader understanding of intelligence beyond mere conversation.

Some of these proposed measures include:

- Capability Tests: Evaluating AI based on its ability to perform specific tasks, such as solving complex mathematical problems or playing strategic games like chess and Go, where AI has achieved remarkable success.

- General Intelligence Assessments: Developing standardized tests that assess a machine’s reasoning, learning capacity, and adaptability in diverse situations, not solely based on conversational ability.

- Behavioral and Emotional Intelligence Metrics: Assessing machines on their ability to recognize and respond appropriately to human emotions, which is essential for applications in healthcare and customer service.

Several AI systems show advanced capabilities even though they could not pass the Turing Test and were never built to attempt it. For instance, IBM’s Watson, which famously won on the quiz show Jeopardy!, demonstrates strong analytical abilities and a vast knowledge base but was not designed for open-ended conversation. Similarly, Google DeepMind’s AlphaGo showcases exceptional skill at the game of Go, a feat requiring deep strategic thinking and pattern recognition, yet it does not engage in dialogue at all. These examples underscore that failing the Turing Test does not imply a lack of capability, just as passing it would not by itself demonstrate general intelligence, further highlighting the need for more comprehensive evaluation frameworks.

The relevance of the Turing Test in contemporary AI applications

The Turing Test, conceptualized by Alan Turing in 1950, continues to hold considerable significance in the contemporary artificial intelligence (AI) landscape. As AI technologies have evolved and become integrated into various facets of life, the Turing Test serves as a foundational benchmark for evaluating machine intelligence, particularly in the realm of conversational agents and chatbots. Despite the emergence of more sophisticated evaluation metrics, the principles behind the Turing Test remain relevant as they address the core question of whether machines can exhibit human-like intelligence and interaction.

In today’s digital environment, the Turing Test is often perceived as both a philosophical inquiry and a practical evaluation tool. It highlights the ongoing challenges in achieving natural language understanding and the complexity of human communication. As organizations increasingly deploy AI systems for customer support, personal assistants, and interactive applications, the Turing Test serves as a guideline for assessing the effectiveness and user-friendliness of these technologies. The ability of an AI to engage users in a manner indistinguishable from a human can lead to enhanced user experiences and greater adoption of AI solutions.

Practical applications relevant to the Turing Test

Numerous AI applications still reference the Turing Test as a measure of success. The following areas illustrate its ongoing relevance:

- Chatbots and Virtual Assistants: AI-driven chatbots, such as those found on customer service platforms, are often evaluated based on their ability to converse naturally and resolve inquiries without human intervention.

- Social Media Bots: Bots that interact on platforms like Twitter and Facebook aim to mimic human behavior, often undergoing informal Turing Test assessments by users who gauge their conversational abilities.

- Therapeutic AIs: AI applications in therapeutic settings, like Woebot, strive to engage users empathetically, making the Turing Test a relevant measure of their effectiveness in human-like interaction.

- Language Translation Tools: Advanced AI translators that facilitate real-time conversations in different languages must also demonstrate an understanding of context and nuance akin to human communication.

Comparing the Turing Test to modern benchmarks, such as the Lovelace Test, reveals advancements in evaluating AI. The Lovelace Test emphasizes an AI’s ability to create something novel, challenging the machine to demonstrate creativity and originality rather than simply conversing like a human. While the Turing Test focuses on conversational indistinguishability, the Lovelace Test represents a shift toward gauging the broader capabilities of AI, reflecting a landscape that values creativity and problem-solving in addition to communication proficiency.

In summary, while the Turing Test may seem dated in an era of rapid advancements, it continues to offer valuable insights into the effectiveness of AI systems in human interaction. As new benchmarks emerge, the Turing Test remains a critical reference point that helps define the attributes of intelligent behavior in machines.

Future directions and potential evolutions of the Turing Test

As we continue to explore the intricate world of artificial intelligence, the Turing Test, conceived by Alan Turing in 1950, remains a benchmark for evaluating machine intelligence. However, with rapid advancements in AI technology, the relevance and structure of the Turing Test may need to evolve. This evolution will not only reflect the changing capabilities of AI but also the increasing complexity of human-machine interactions.

The Turing Test traditionally assesses a machine’s ability to exhibit intelligent behavior indistinguishable from that of a human. However, as AI systems become more sophisticated, the parameters and metrics for what constitutes “intelligence” are likely to shift. Future iterations of the Turing Test may incorporate multi-modal assessments, where machines are evaluated not just on conversational skills but also on emotional intelligence, creativity, and adaptability in various contexts. For example, AI systems could be challenged to engage in discussions about art, demonstrate empathy in simulated scenarios, or creatively problem-solve in real-time situations.

Research Areas Influencing Machine Intelligence Assessment

Various research areas are paving the way for the future of machine intelligence assessment. Each of these domains contributes to enriching our understanding of AI capabilities and refining evaluation methods.

- Natural Language Processing (NLP): Advancements in NLP allow machines to understand and generate human-like text. This area of research is crucial for enhancing conversational AI, making it more difficult to distinguish between human and machine interactions.

- Emotional AI: The development of emotional AI focuses on enabling machines to recognize and respond to human emotions, thus enhancing the quality of interactions and the overall user experience.

- Machine Learning and Deep Learning: Techniques in machine learning and deep learning are facilitating the creation of more complex models capable of nuanced decision-making, providing a richer basis for assessing intelligence.

- Robotics: The integration of AI with robotics allows for physical interactions, which could be a new dimension for the Turing Test, assessing how machines perform tasks in real-world environments.

- Ethics and AI Safety: Research in this domain is essential to ensure that future assessments of AI include considerations for ethical behavior and safety, leading to responsible AI deployment.

The evolution of the Turing Test may also lead to the development of alternative frameworks that can better capture the nuances of machine intelligence. Below is a summary of potential frameworks that could emerge:

| Framework | Description |
|---|---|
| Embodied Turing Test | Assesses AI through physical interaction in real-world scenarios, evaluating practical problem-solving and adaptability. |
| Emotional Turing Test | Measures an AI’s ability to understand and appropriately respond to human emotions during interactions. |
| Creative Turing Test | Evaluates AI based on its ability to produce original content in fields such as art, music, and literature. |
| Collaborative Turing Test | Tests AI by assessing its performance in collaborative tasks with humans, focusing on teamwork and communication skills. |

With these evolving frameworks and ongoing research, the assessment of machine intelligence is set to become a multidimensional endeavor, reflecting the complexities of human cognition and interaction.

Cross-cultural perspectives on the Turing Test and machine intelligence

The Turing Test has become a significant benchmark in assessing machine intelligence, yet its interpretation varies greatly across cultures. Different societies have unique philosophical, ethical, and practical frameworks that shape their understanding of artificial intelligence (AI) and the Turing Test. This divergence reflects deep-seated cultural values regarding intelligence, consciousness, and the role of technology in human life.

Cultural differences influence how intelligence and consciousness are defined and understood globally. For instance, Western cultures often equate intelligence with rational thought and analytical capabilities, emphasizing individualism. In contrast, many Eastern cultures may integrate emotional intelligence and collective well-being into their interpretations of intelligence. This leads to varying expectations for AI’s capabilities and roles in society. For example, in Japan, where robots are often seen as companions, machines are expected to demonstrate emotional understanding and social etiquette, reflecting a blend of technological advancement and cultural values.

Examples of AI perception in various cultures

The perception of AI varies significantly across different regions, shaped by historical, social, and cultural contexts.

- In the United States, the Turing Test is primarily viewed through a lens of competition and innovation. AI is often seen as a tool for enhancing human capabilities, driving economic growth, and addressing complex problems.

- In European countries, there is a more cautious approach to AI, focusing on ethical implications and regulatory frameworks. Conversations around the Turing Test often include concerns about privacy, accountability, and the potential for misuse of technology.

- In China, AI is embraced as a means of national development and technological leadership. Discussion tends to focus less on human-like interaction and more on efficiency and large-scale deployment, including state uses such as surveillance and economic management.

- In the Middle East, perceptions of AI are mixed, with fascination towards technological advancement coexisting with skepticism due to concerns about job displacement and cultural preservation. The Turing Test is seen as an indicator of technological prowess but also raises questions about the implications for traditional values.

These examples underscore the varying perceptions of AI across different cultural landscapes, highlighting how societal contexts shape the understanding of intelligence and machine consciousness. Each perspective offers valuable insights into the complex relationship between humanity and technology, illustrating that the Turing Test is not just a scientific measure but also a cultural artifact.

The role of the Turing Test in the development of ethical AI standards

The Turing Test, formulated by Alan Turing in 1950, serves not only as a measure of artificial intelligence’s ability to exhibit human-like behavior but also as a critical framework influencing the ethical standards of AI development. This interaction between humans and machines raises significant ethical questions regarding accountability, transparency, and the implications of creating entities that could potentially mimic human cognition.

The Turing Test’s relevance extends beyond mere imitation; it challenges developers to consider the moral responsibilities associated with creating intelligent systems. As AI technologies strive to pass this test, developers must confront ethical considerations that include the potential for misuse, biases in AI algorithms, and the societal impacts of these technologies. The pursuit of AI that can convincingly perform human-like tasks necessitates an ethical framework that safeguards against harmful consequences.

Accountability and Transparency in AI Development

Ensuring accountability and transparency in AI systems is paramount, especially for those aiming to pass the Turing Test. The complexity and opacity of many AI algorithms can lead to a lack of trust among users and stakeholders. Consequently, developers and organizations must implement clear protocols that define accountability structures and maintain transparency throughout the development process.

1. Accountability Structures:

Developing AI systems that abide by ethical standards includes establishing clear accountability mechanisms. This involves defining the responsibilities of developers, organizations, and users in the event of an AI failure or harmful behavior. Accountability frameworks help to ensure that there are consequences for neglecting ethical practices.

2. Transparent Algorithms:

Transparency in AI algorithms is crucial for understanding decision-making processes. By making AI models understandable and accessible, developers can foster trust and allow for easier identification of biases or ethical concerns. This is particularly important when AI systems operate in critical areas such as healthcare, finance, and criminal justice.

3. Stakeholder Involvement:

Engaging various stakeholders, including ethicists, policymakers, and the public, can enhance transparency and accountability. Collaborative discussions can lead to the establishment of ethical guidelines that reflect a diverse range of perspectives.

Regulatory bodies and organizations focused on ethical AI practices play a vital role in enhancing these standards. For example, the European Commission has set forth guidelines for trustworthy AI, emphasizing human oversight, non-discrimination, and transparency. The IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems also promotes ethical considerations in AI, advocating for inclusivity and accountability in the development phase.

In summary, the Turing Test not only acts as a benchmark for AI performance but also invites critical discourse surrounding the ethical implications of creating intelligent systems. By fostering accountability and transparency, developers can work towards creating AI technologies that align with societal values and ethical standards.

Final Thoughts

In conclusion, the Turing Test serves as both a historical landmark and a contemporary benchmark for discussions surrounding artificial intelligence. While it sparks debates about the nature of consciousness and intelligence, it also points to the need for evolving standards in the evaluation of AI. As we continue to innovate and challenge these concepts, the future may hold new frameworks and insights that will redefine our understanding of what it means for machines to truly think and engage with humanity.

User Queries

What is the main purpose of the Turing Test?

The main purpose of the Turing Test is to determine whether a machine’s behavior can be indistinguishable from a human’s, thereby assessing its ability to exhibit intelligent behavior.

Who can pass a Turing Test?

Only a machine can be said to ‘pass’ the Turing Test: it passes when the evaluator cannot reliably tell its responses from those of the human participant. Humans take part as evaluators and as the baseline for comparison.

Why is the Turing Test criticized?

The Turing Test is criticized for evaluating behavior rather than true understanding or consciousness, leading some to argue it doesn’t effectively measure real intelligence.

Are there alternatives to the Turing Test?

Yes, there are several alternatives, such as the Lovelace Test, which focuses on a machine’s creativity and ability to generate original content, challenging how we measure intelligence.

How has the Turing Test influenced AI development?

The Turing Test has influenced AI development by providing a framework for assessing machine capabilities and encouraging advancements in natural language processing and conversational AI.