Natural Language Processing (NLP) is the branch of artificial intelligence that enables computers to understand, interpret, and generate human language.

The field draws on linguistics, computer science, and cognitive psychology, and it has become central to artificial intelligence. NLP underpins technologies we encounter daily, from voice-activated assistants to language translation services. This article covers its history, core techniques, challenges, industry applications, ethical considerations, and future trends.

Natural Language Processing as a Field of Study

Natural Language Processing (NLP) has evolved into a vital component of artificial intelligence, shaping how machines understand and interact with human language. The historical development of NLP can be traced back to the mid-20th century, when early attempts at machine translation and text analysis laid the groundwork for modern computational linguistics. Significant milestones include the advent of rule-based systems, the introduction of statistical methods in the 1990s, and more recently, the rise of deep learning algorithms, which have dramatically enhanced the capabilities of NLP applications. The significance of NLP within artificial intelligence extends beyond mere text processing; it enables machines to comprehend context, sentiment, and even subtleties of language, paving the way for more sophisticated interactions between humans and machines.

The interdisciplinary nature of NLP is one of its most defining characteristics, integrating insights from linguistics, computer science, and cognitive psychology. Linguistics provides the foundational theories regarding language structure, semantics, and syntax, which are crucial for developing algorithms that can analyze and generate human language. Computer science contributes the necessary computational techniques and frameworks that allow for large-scale data processing and model training, while cognitive psychology informs our understanding of how humans process language and communicate, which can be emulated in machine learning systems. This confluence of disciplines enhances the effectiveness of NLP applications, making them more intuitive and user-friendly.

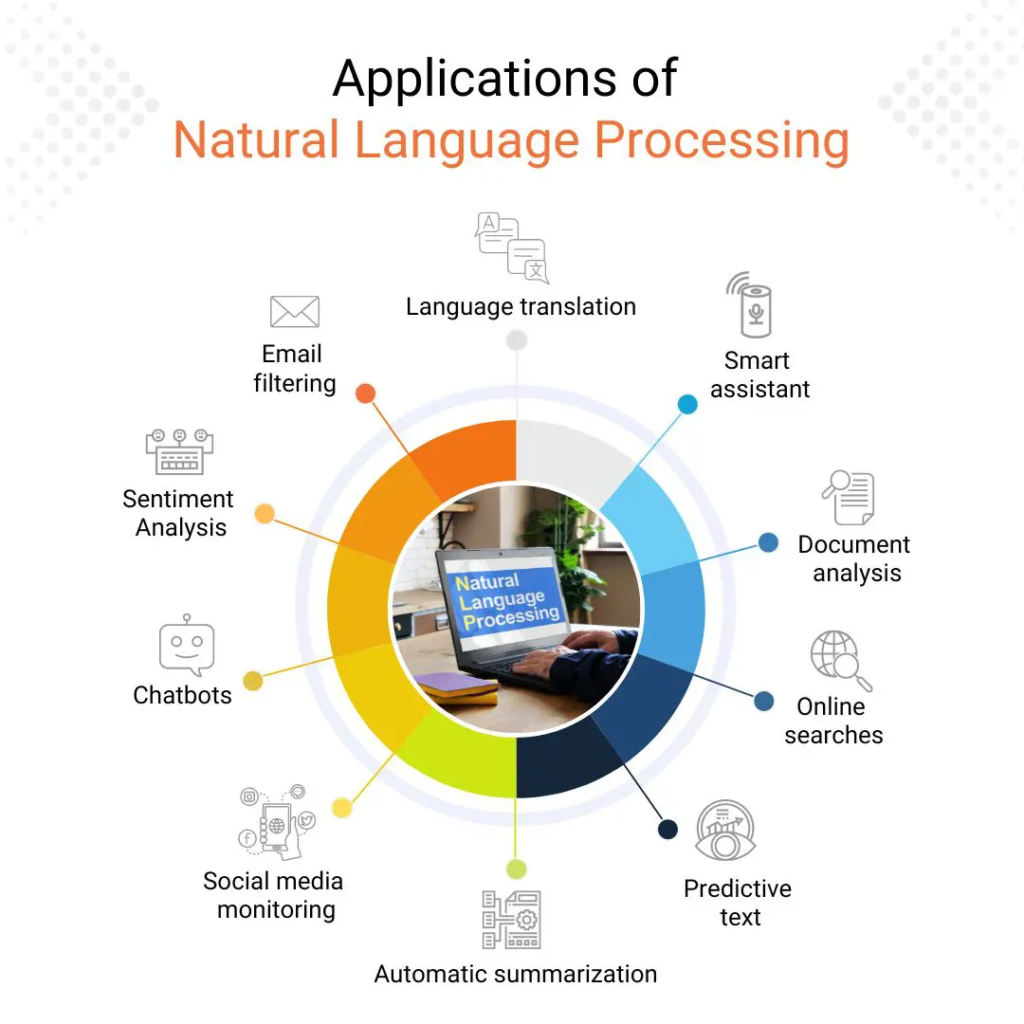

Branches and Applications of NLP

The branches of NLP have expanded rapidly, resulting in a wide array of applications that impact various sectors. These include but are not limited to the following:

- Text Analysis: This involves extracting meaningful information from unstructured text, such as summarization or sentiment analysis. For example, businesses use text analysis to gauge customer sentiments in reviews and feedback.

- Speech Recognition: NLP technologies convert spoken language into text. Virtual assistants like Siri and Google Assistant utilize this technology to facilitate user commands, enhancing convenience in daily tasks.

- Machine Translation: Tools such as Google Translate utilize NLP to convert text from one language to another, breaking down language barriers and fostering global communication.

- Chatbots and Virtual Assistants: These applications leverage NLP to understand and respond to user queries in a conversational manner, improving customer service in various industries.

- Information Retrieval: NLP aids in enhancing search engines by allowing them to understand user queries better and deliver more relevant results based on context and intent.

The applications of NLP are vast, impacting fields such as healthcare, finance, education, and entertainment. For instance, in healthcare, NLP algorithms can analyze clinical notes to improve patient care by identifying relevant information that aids in diagnosis and treatment plans. As technology advances, the role of NLP continues to evolve, providing innovative solutions that enhance communication and understanding in an increasingly digital world.

Core Techniques in Natural Language Processing

Natural Language Processing (NLP) encompasses a broad spectrum of techniques that enable machines to understand and interpret human language. By applying a range of methods, NLP not only facilitates the automation of language-related tasks but also enhances our interactions with technology. The core techniques in NLP can be categorized into several fundamental processes, including tokenization, stemming, and lemmatization, alongside various machine learning approaches that drive these advancements.

Fundamental Techniques of NLP

The foundational techniques in NLP are crucial for breaking down and processing natural language data effectively. Each technique serves a specific purpose in the overall process.

- Tokenization: This process involves splitting text into smaller units, or tokens, which can be words, phrases, or symbols. Tokenization helps in analyzing the structure and meaning of sentences by treating each token as a discrete entity.

- Stemming: Stemming reduces words to a base or root form by stripping affixes with heuristic rules. For example, “running” and “runs” are both stemmed to “run.” Because it works only on surface forms, a stemmer typically leaves irregular forms such as “ran” unchanged, and it may produce stems that are not valid words (for example, “studies” becomes “studi”).

- Lemmatization: Like stemming, lemmatization reduces words to a base form, but it uses a vocabulary and the word’s part of speech to return the dictionary form (the lemma). For instance, “better” becomes “good” and “ran” becomes “run,” both valid words, whereas stemming may yield non-words.
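The three techniques above can be sketched with nothing but the Python standard library. This is a toy illustration, not how production libraries implement them: the suffix list, the doubled-consonant rule, and the `LEMMA_TABLE` lookup are all simplifying assumptions made up for this example, and real stemmers and lemmatizers use far larger rule sets and dictionaries.

```python
import re

def tokenize(text):
    """Split text into lowercase word tokens and standalone punctuation marks."""
    return re.findall(r"[a-z0-9]+(?:'[a-z]+)?|[^\sa-z0-9]", text.lower())

# Toy suffix list (an assumption for this sketch); real stemmers apply many ordered rules.
SUFFIXES = ("ing", "ed", "es", "s")

def stem(token):
    """Strip the first matching suffix, then undo a doubled final consonant."""
    for suffix in SUFFIXES:
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            token = token[: -len(suffix)]
            # "runn" -> "run", but keep endings like "ll" and "ss" ("fall", "pass").
            if len(token) > 2 and token[-1] == token[-2] and token[-1] not in "aeiouls":
                token = token[:-1]
            break
    return token

# Hypothetical exception table standing in for a full dictionary lookup.
LEMMA_TABLE = {"ran": "run", "better": "good", "studies": "study", "mice": "mouse"}

def lemmatize(token):
    """Return the dictionary form if known, otherwise fall back to the stem."""
    return LEMMA_TABLE.get(token, stem(token))

tokens = tokenize("The runner ran; she keeps running and studies better.")
print(tokens)
print([stem(t) for t in tokens])       # "studies" becomes the non-word "studi"
print([lemmatize(t) for t in tokens])  # "ran" -> "run", "better" -> "good"
```

Comparing the last two printed lists shows the trade-off: the stemmer is cheap but can emit non-words and misses irregular forms, while the lemmatizer depends on having a dictionary entry for each word.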

Machine Learning Methods in NLP

Machine learning plays a pivotal role in enhancing NLP capabilities, utilizing both supervised and unsupervised learning methods to analyze and generate language data.

- Supervised Learning: In supervised learning, models are trained using labeled datasets where the desired output is provided. Techniques like classification and regression are employed to predict outcomes and categorize text. Example applications include spam detection and sentiment analysis, where models learn from examples to make predictions.

- Unsupervised Learning: Unsupervised learning involves training models on data without explicit labels. This approach is used for clustering and topic modeling, revealing hidden patterns or groupings within datasets. Techniques like Latent Dirichlet Allocation (LDA) allow for extracting topics from a collection of texts without pre-defined categories.
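As an illustration of the supervised case, here is a minimal multinomial Naive Bayes spam classifier written from scratch. Naive Bayes is one common choice for text classification, not the only one, and the six training messages and the function names are invented for this sketch. The unsupervised case (for example LDA) is not shown here.

```python
import math
from collections import Counter, defaultdict

def train_naive_bayes(examples):
    """Count words per label from (text, label) pairs."""
    word_counts = defaultdict(Counter)
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.lower().split())
    vocab = {w for counts in word_counts.values() for w in counts}
    return word_counts, label_counts, vocab

def predict(model, text):
    """Return the label with the highest log-probability under the model."""
    word_counts, label_counts, vocab = model
    total = sum(label_counts.values())
    scores = {}
    for label in label_counts:
        score = math.log(label_counts[label] / total)  # log prior
        denom = sum(word_counts[label].values()) + len(vocab)
        for word in text.lower().split():
            if word in vocab:  # ignore words never seen in training
                # Add-one (Laplace) smoothing avoids zero probabilities.
                score += math.log((word_counts[label][word] + 1) / denom)
        scores[label] = score
    return max(scores, key=scores.get)

# Made-up labeled data; a real dataset would be far larger.
TRAIN = [
    ("win a free prize now", "spam"),
    ("free money click now", "spam"),
    ("claim your free prize", "spam"),
    ("meeting agenda for monday", "ham"),
    ("please review the project report", "ham"),
    ("lunch on monday with the team", "ham"),
]

model = train_naive_bayes(TRAIN)
print(predict(model, "free prize inside"))          # spam
print(predict(model, "project meeting on monday"))  # ham
```

The model learns entirely from the labeled examples: no rule about the word “free” is written by hand, which is the defining property of supervised learning described above.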

Transformative Impact of Deep Learning on NLP

Deep learning has profoundly transformed the landscape of Natural Language Processing, enabling more sophisticated analyses and applications than traditional models could provide. By utilizing multi-layered neural networks, deep learning algorithms can automatically learn representations of data at varying levels of abstraction.

Two of the most influential deep learning architectures are Recurrent Neural Networks (RNNs) and Transformers. RNNs process a sequence one step at a time, which suits tasks like language modeling and speech recognition but limits parallelism and makes long-range dependencies hard to learn. Transformers replace recurrence with self-attention, so every position in a sequence can be processed in parallel, leading to substantial improvements in training efficiency and accuracy.
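The parallel processing attributed to Transformers comes from attention: each position computes a weighted average over all positions, with weights given by softmax(Q·Kᵀ / √d_k). The sketch below implements that scaled dot-product attention in plain Python for readability. The three 2-dimensional token vectors are made up, and using the same vectors as queries, keys, and values is a simplification, since real models learn separate projections for each.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:  # each query is independent, so this loop can run in parallel
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs

# Hypothetical token vectors, used as queries, keys, and values alike.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in attention(tokens, tokens, tokens):
    print([round(x, 3) for x in row])
```

No output row depends on another row's result, which is why the computation parallelizes in a way that step-by-step recurrence does not.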

Deep learning models can capture complex patterns and dependencies within textual data, enabling advancements in translation, summarization, and even creative writing. For instance, models like OpenAI’s GPT series generate coherent text that closely resembles human writing. These models are trained on vast amounts of text data, which allows them to model context, tone, and structure far better than earlier approaches.

Moreover, the emergence of transfer learning in deep learning, particularly with models pre-trained on extensive datasets, has made it easier to adapt these models to specific tasks with minimal additional training. This approach has significantly reduced the resources and time required for deploying NLP applications, thereby democratizing access to advanced language processing capabilities.

The synergy of deep learning with NLP continues to pave the way for innovative applications, transforming how we interact with machines and opening new avenues for research and development in language technology.

Challenges in Natural Language Processing

NLP aims to enable machines to understand and respond to human language in a meaningful way, but numerous challenges limit the effectiveness of NLP systems. These challenges stem from the inherent complexity of human language: words and sentences are often ambiguous, meaning depends on context, and the world’s languages differ widely in structure.

Ambiguity and Context Understanding

Ambiguity is one of the primary hurdles in NLP. Words can have multiple meanings based on their usage in context, leading to potential misinterpretations by machines. For instance, the word “bank” can refer to a financial institution or the side of a river, depending on the sentence context. Understanding this requires more than just analyzing text at a surface level; it necessitates comprehension of the broader context, including prior sentences and the intended sentiment of the speaker.

Context understanding is also crucial for capturing the nuances of language. For example, consider the phrase “I love this.” Without additional context, it is impossible to determine whether the speaker is referring to a food item, a piece of art, or a person. NLP systems must be designed to consider various contextual factors, such as previous interactions and the topic of conversation, to improve accuracy and reduce ambiguity.
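The “bank” example can be made concrete with a simplified Lesk-style approach to word sense disambiguation: choose the sense whose definition shares the most words with the surrounding sentence. This is a minimal sketch under stated assumptions. The two glosses and the stopword list are hand-written for illustration, and modern systems generally rely on learned contextual representations instead.

```python
STOPWORDS = {"a", "an", "the", "of", "to", "on", "at", "in",
             "and", "or", "that", "i", "my", "we"}

# Hand-written glosses for illustration; a real system would use a lexical resource.
SENSES = {
    "financial institution":
        "an institution that accepts deposits of money and makes loans to customers",
    "river side":
        "the sloping land beside a river or stream of water",
}

def content_words(text):
    """Lowercase the text, strip punctuation, and drop stopwords."""
    return {w.strip(".,;!?").lower() for w in text.split()} - STOPWORDS

def disambiguate(sentence, senses=SENSES):
    """Pick the sense whose gloss overlaps most with the sentence (ties: first sense)."""
    context = content_words(sentence)
    return max(senses, key=lambda s: len(context & content_words(senses[s])))

print(disambiguate("I went to the bank to deposit money into my account."))
# financial institution
print(disambiguate("We sat on the bank of the river and watched the water."))
# river side
```

The method only looks at word overlap, so it fails when the context uses different vocabulary from the gloss, which is one reason context understanding remains difficult.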

Language Diversity and Grammatical Structures

The diversity of languages presents another significant challenge for NLP. Each language has its own syntax, semantics, and idiomatic expressions. Chinese, which is written without spaces between words and therefore requires word segmentation, and Arabic, known for its complex morphology, raise difficulties that systems designed around English, with its comparatively limited inflection, do not handle well. For instance, the inflectional nature of Arabic can lead to a vast number of word forms derived from a single root. This complexity demands that NLP systems be tailored to the intricacies of each language, which can be resource-intensive and time-consuming.

Data quality and availability also play a critical role in the effectiveness of NLP systems. High-quality, annotated datasets are essential for training machine learning models. If the data is noisy or unrepresentative, the NLP system’s performance will suffer significantly. Inadequate data can lead to models that misinterpret language, fail to understand subtle nuances, or produce irrelevant outputs. For example, a sentiment analysis model trained on biased data may inaccurately classify neutral statements as positive or negative, ultimately misleading users.

Furthermore, the availability of diverse data sources is crucial for creating robust NLP systems. A system trained only on English text may perform poorly when applied to other languages or dialects. To address these challenges, developers often resort to techniques like transfer learning, which allows models trained on one language to adapt to another with limited data. However, this approach requires careful consideration of the linguistic properties of both the source and target languages to mitigate issues arising from language diversity.

Applications of Natural Language Processing in Industry

Natural Language Processing (NLP) has revolutionized various industries by enhancing communication, decision-making, and consumer engagement. The versatility of NLP allows businesses to analyze vast amounts of textual data, improve efficiencies, and provide more personalized experiences for their customers. Here, we explore the impactful applications of NLP in healthcare, finance, and customer service, complemented by real-world examples.

Impact of NLP in Healthcare, Finance, and Customer Service

NLP is transforming how industries operate by automating processes and providing insights from unstructured data. In the healthcare sector, NLP algorithms analyze clinical notes and medical literature to support diagnosis and treatment recommendations. For instance, IBM’s Watson Health products applied NLP to patient records and medical literature with the aim of helping doctors identify potential treatment plans based on patient history and current research.

In finance, NLP is used for sentiment analysis, enabling firms to gauge public sentiment towards stocks or products by analyzing news articles, social media, and earnings calls. One example is the Bloomberg Terminal, which integrates NLP to provide real-time insights into market sentiment, helping traders make informed decisions.

For customer service, NLP powers chatbots and virtual assistants that enhance user experiences by providing instant responses to inquiries. Companies like Amazon and Google leverage NLP in their virtual assistants, allowing users to manage tasks through voice commands and receive immediate assistance. This not only improves efficiency but also helps companies reduce operational costs while enhancing customer satisfaction.

Role of Sentiment Analysis in Understanding Consumer Behavior and Market Trends

Sentiment analysis is pivotal in understanding consumer perceptions and market trends, as it assesses the emotions expressed in textual data. By analyzing social media posts, reviews, and feedback, companies can gain valuable insights into consumer preferences and behaviors. This approach allows businesses to tailor their marketing strategies, product development, and customer engagement initiatives.

The sentiment analysis process typically involves the following steps:

- Data Collection: Aggregating large volumes of data from various sources, including social media platforms, customer reviews, and forums.

- Text Processing: Cleaning and preprocessing the data to remove noise and irrelevant information, enabling the analysis to focus on sentiment-related content.

- Sentiment Classification: Utilizing machine learning algorithms to classify the sentiment as positive, negative, or neutral, which aids in categorizing consumer opinions.

- Insights Generation: Interpreting the classified data to identify trends, patterns, and consumer sentiments toward products or services.
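The four steps above can be sketched end to end as follows. This is a simplified illustration, not a production design: the example posts are made up, and the classification step uses a tiny hand-written word lexicon in place of the machine learning classifier mentioned above, purely to keep the sketch self-contained.

```python
import re
from collections import Counter

# Tiny hypothetical lexicon; production systems usually learn weights from labeled data.
POSITIVE = {"love", "great", "excellent", "fast", "happy"}
NEGATIVE = {"hate", "terrible", "slow", "broken", "disappointed"}

def collect():
    """Step 1: stand-in for gathering posts and reviews (made-up examples)."""
    return [
        "I LOVE the new phone!!! Great camera http://example.com",
        "Battery is terrible and the app feels slow :(",
        "Arrived on Tuesday.",
        "Not happy with the broken screen, very disappointed",
    ]

def preprocess(text):
    """Step 2: lowercase, drop URLs, keep only alphabetic tokens."""
    text = re.sub(r"https?://\S+", " ", text.lower())
    return re.findall(r"[a-z]+", text)

def classify(tokens):
    """Step 3: compare counts of positive and negative lexicon hits."""
    score = sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)
    return "positive" if score > 0 else "negative" if score < 0 else "neutral"

def summarize(texts):
    """Step 4: aggregate the labels into counts for reporting."""
    return Counter(classify(preprocess(t)) for t in texts)

print(summarize(collect()))
```

For these four posts the tally is two negative, one positive, and one neutral. Note that the lexicon approach ignores negation (“Not happy” counts “happy” as positive), which is one reason trained classifiers are generally preferred for step 3.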

The significance of sentiment analysis becomes evident when companies can swiftly adapt to market changes. For instance, during a product launch, a quick analysis of social media reactions can inform a company about customer approval or concerns, allowing immediate adjustments to marketing strategies. By leveraging sentiment analysis, businesses can make data-driven decisions, thus gaining a competitive edge in the market.

Ethical Considerations in Natural Language Processing

The rapid advancement of Natural Language Processing (NLP) technologies raises significant ethical considerations that must be carefully navigated. As NLP systems become increasingly integrated into daily life, issues surrounding bias, fairness, transparency, and data privacy become paramount. Understanding these implications is crucial for developers, users, and policymakers alike.

Bias and Fairness in NLP Algorithms

NLP algorithms are often trained on large datasets that may inadvertently reflect societal biases. This can lead to unfair treatment of certain groups, perpetuating stereotypes and reinforcing inequalities. For example, research on word embeddings and sentiment analysis systems has found that models trained on large text corpora can associate names typical of certain ethnic groups with more negative sentiment, which can lead to discriminatory outcomes in applications such as hiring tools or law enforcement systems.

Several key aspects highlight the ethical concerns regarding bias and fairness:

- Data Representation: The datasets used to train NLP models often lack diversity, which can result in models that do not accurately represent all populations. This can lead to misinterpretations and skewed outputs.

- Algorithmic Transparency: Many NLP systems operate as “black boxes,” making it difficult to understand how decisions are made. Without transparency, it is challenging to identify and rectify biased outcomes.

- Accountability: Developers and organizations must take responsibility for the effects of their algorithms. Implementing fairness audits and bias mitigation techniques can help address ethical challenges.
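One simple form of the fairness audit mentioned above is a template test: fill identical sentences with names from different groups and compare the model's average scores. The sketch below is illustrative only. `toy_score` and its weights are invented stand-ins for a real sentiment model, with a deliberately planted spurious weight so the audit has something to detect, and the names and group labels are placeholders.

```python
import re

# Hypothetical learned weights; the entry for "alex" mimics a spurious association
# a model might pick up from skewed training data.
WEIGHTS = {"great": 1.0, "terrible": -1.0, "alex": -0.5}

def toy_score(text):
    """Sum the weights of known tokens; stands in for a real sentiment model."""
    return sum(WEIGHTS.get(tok, 0.0) for tok in re.findall(r"[a-z]+", text.lower()))

def audit(score_fn, templates, groups):
    """Return (largest gap between group means, mean score per group)."""
    means = {}
    for group, names in groups.items():
        scores = [score_fn(t.format(name=n)) for t in templates for n in names]
        means[group] = sum(scores) / len(scores)
    return max(means.values()) - min(means.values()), means

TEMPLATES = ["{name} is a great colleague.", "{name} wrote a terrible report."]
GROUPS = {"group_a": ["Alex"], "group_b": ["Sam"]}

gap, means = audit(toy_score, TEMPLATES, GROUPS)
print(means)  # per-group mean scores
print(gap)    # a nonzero gap means the score depends on the name alone
```

Because the sentences differ only in the name, any gap between the group means points to the model reacting to the name itself. A real audit would use many templates and names and a statistical test rather than a raw difference.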

Societal Impact on Privacy and Data Security

The increasing reliance on NLP applications raises significant concerns regarding privacy and data security. These applications often require substantial amounts of personal data to function effectively, leading to potential vulnerabilities. For instance, chatbots and virtual assistants frequently process sensitive information, which, if inadequately secured, can be vulnerable to breaches.

The societal impact of these applications can be considered through several key points:

- Data Collection Practices: Many NLP systems collect user data to improve their functionality. This necessitates strict guidelines to ensure consent and transparency regarding how user data is utilized.

- Data Anonymization: While anonymization techniques aim to protect user identities, they are not foolproof. Re-identification attacks, which cross-reference supposedly anonymous records with other data sources, can expose individuals’ private information, leading to significant privacy concerns.

- Regulatory Frameworks: The deployment of NLP technologies must occur within a strong regulatory framework that ensures user privacy and data protection. Laws such as GDPR in Europe set important precedents for data handling practices.
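As a small illustration of the data anonymization point above, the sketch below masks email addresses and phone-like numbers before text is stored or analyzed. The two regular expressions are rough assumptions written for this example and will miss many real-world formats. Pattern-based redaction of this kind is only a first step, because names, addresses, and indirect identifiers remain in the text.

```python
import re

# Rough illustrative patterns (assumptions); real PII detection needs much broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def redact(text):
    """Replace email addresses and phone-like numbers with placeholder tags."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)

print(redact("Contact jane.doe@example.com or call +1 (555) 010-9999 after 5pm."))
# Contact [EMAIL] or call [PHONE] after 5pm.
```

The redacted sentence would still reveal the sender if it also contained a name or job title, which is why anonymization alone cannot be treated as a privacy guarantee.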

“In the age of information, safeguarding personal data is not just a preference but a necessity for maintaining trust in technology.”

Future Trends in Natural Language Processing

As Natural Language Processing (NLP) continues to evolve, several key trends are emerging that promise to reshape how machines understand and interact with human language. The field is advancing rapidly, driven by the need for more sophisticated language models, enhanced multilingual capabilities, and integration with cutting-edge technologies. This discussion will explore these advancements and their implications for future human-computer interactions.

Advancements in Multilingual Processing and Real-Time Translation

The globalization of communication has necessitated advancements in multilingual processing and real-time translation. Modern NLP systems are increasingly capable of understanding and generating text in multiple languages, bridging communication gaps that once hindered international collaboration. With the integration of deep learning techniques, models built on the Transformer architecture, introduced by Google researchers in 2017, have significantly improved translation accuracy, making output more context-aware and nuanced.

Real-time translation applications are becoming commonplace, empowering users to communicate seamlessly across different languages. For instance, tools like Google Translate and Microsoft Translator now offer instant translations in conversations through voice recognition, allowing people from diverse linguistic backgrounds to engage with each other effortlessly. Additionally, recent innovations in NLP have introduced features such as contextual translation, where the software considers the surrounding text to provide more appropriate translations.

Integration of NLP with Augmented Reality and Internet of Things

The intersection of NLP with Augmented Reality (AR) and the Internet of Things (IoT) is set to redefine user interaction with technology. By combining NLP with AR, applications can enhance the user experience by providing context-sensitive information in real-time. For example, imagine using AR glasses that translate a sign in a foreign language as you look at it, turning everyday experiences into enriched, informative encounters.

Similarly, IoT devices equipped with NLP capabilities can facilitate more natural interactions. Smart home assistants, for instance, can interpret user commands more effectively, allowing for smoother control over various devices. As these technologies converge, the potential for creating intelligent environments that understand and respond to human language will broaden significantly.

Innovations in NLP are poised to transform human-computer interactions profoundly. By making interactions more intuitive, users can communicate with machines as they would with another person, leading to a more natural and engaging experience. For instance, conversational agents and chatbots will become increasingly sophisticated, understanding context, sentiment, and even humor, thereby providing a more human-like interaction.

As these advancements unfold, we can expect to see a significant reduction in language barriers and an increase in accessibility for users across different backgrounds. Moreover, the ability to process and respond to language in real-time will likely enhance various sectors, including education, customer service, and healthcare, paving the way for a more connected world where technology complements human communication seamlessly.

Final Wrap-Up

In conclusion, the journey through Natural Language Processing (NLP) highlights its transformative power across industries and its potential for future advancements. As we navigate the challenges and ethical considerations of this innovative field, it’s clear that NLP will continue to shape the way we communicate and interact with technology. Embracing these developments will not only improve efficiency but also foster deeper connections between humans and machines.

FAQ Section

What is the main purpose of Natural Language Processing (NLP)?

The main purpose of NLP is to enable computers to understand, interpret, and generate human language, facilitating seamless interaction between humans and machines.

How does NLP differ from traditional programming?

Traditional programs follow rules that a developer writes explicitly, whereas modern NLP systems learn patterns of language understanding and generation from data, which lets them adapt to varied user input.

What are some real-world examples of NLP applications?

Real-world applications include chatbots for customer service, voice assistants like Siri and Alexa, and automated translation tools such as Google Translate.

What challenges does NLP face regarding language diversity?

NLP faces challenges in processing languages with different grammatical structures, idiomatic expressions, and cultural nuances, which can lead to misunderstandings and inaccuracies.

How is sentiment analysis utilized in businesses?

Sentiment analysis is used by businesses to gauge customer opinions and preferences, helping them make informed decisions about products and marketing strategies.