Algorithmic bias refers to systematic and unfair discrimination that occurs when algorithms produce skewed results because of flawed data, human biases, or design flaws. As technology increasingly permeates our daily lives, understanding algorithmic bias becomes essential, especially in sectors like healthcare, finance, and criminal justice, where biased decisions can have serious repercussions. Societal inequalities have historically seeped into technological frameworks, which makes it vital to address these issues head-on.

Throughout this discussion, we will explore the origins and causes of algorithmic bias, its implications for social justice, and the strategies needed to mitigate its effects. With real-world examples and a focus on the importance of ethical AI practices, we aim to illuminate the often-overlooked consequences of algorithmic bias and the steps we can take to create fairer technologies.

Understanding Algorithmic Bias in Technology

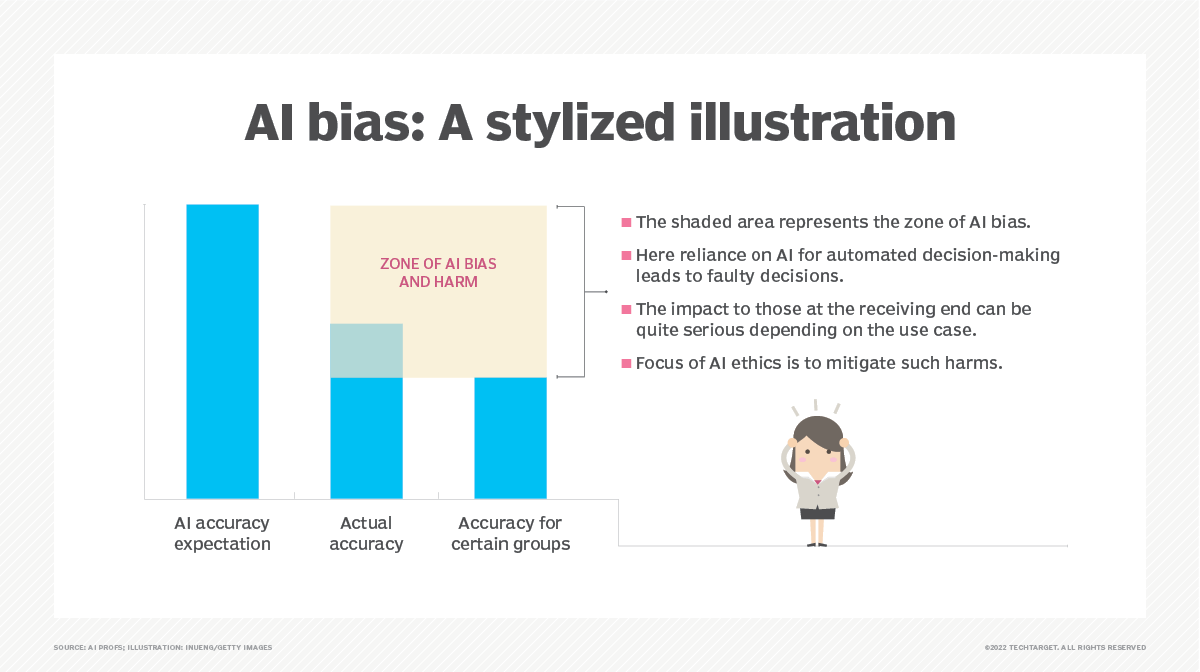

Algorithmic bias refers to systematic and unfair discrimination that emerges in the outputs of algorithms due to prejudiced assumptions or flawed data. As technology becomes increasingly integrated into our daily lives, algorithmic bias has grown in relevance. It can significantly impact decisions made in various sectors, from hiring practices to law enforcement. With algorithms now wielding considerable power in shaping opinions and determining opportunities, understanding their implications is more crucial than ever.

The roots of algorithmic bias lie in the historical inequities embedded in the datasets used to train algorithms. These datasets often reflect societal biases stemming from cultural, economic, or racial disparities. Consequently, when algorithms are trained on such biased data, they can perpetuate discrimination rather than alleviate it. The implications for society are profound: when biases are encoded into technology, they can lead to unfair treatment of individuals based on race, gender, or socioeconomic status. This has raised ethical concerns and sparked discussions around accountability and transparency in algorithm design.

Real-world examples shed light on the impact of algorithmic bias across various fields. In healthcare, algorithms used for determining eligibility for treatments have been found to favor certain demographic groups, often based on historical health data that underrepresents minority populations. For instance, a widely used risk assessment tool in healthcare was found to underestimate the risk of complications in Black patients, leading to inappropriate care recommendations.

In finance, algorithms used for credit scoring and lending decisions can inadvertently reflect socioeconomic inequalities. Certain demographic groups may be disadvantaged due to historical lending patterns or biases present in the data used to train these algorithms. This can result in higher rates of loan denials and less favorable terms for individuals from those groups.

The criminal justice system also faces challenges with algorithmic bias. Predictive policing tools, which analyze crime data to predict future criminal activity, can reinforce existing biases. For example, if historical arrest data disproportionately reflects certain racial groups, the algorithm may overly focus police resources in those communities, leading to a cycle of increased scrutiny and enforcement.
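The cycle described above can be illustrated with a deliberately simplified toy model. Everything in this sketch is an assumption made for illustration (the function name `simulate_patrol_feedback`, the two districts, the rates, and the rule that patrols are allocated in proportion to recorded incidents); it is not a description of any real predictive policing product.

```python
def simulate_patrol_feedback(initial_records, true_rates, patrols_per_round, rounds):
    """Toy model: patrols follow recorded incidents, and records follow patrols.

    initial_records   -- historical recorded incidents per district (hypothetical)
    true_rates        -- underlying incident rate per patrol, per district
    patrols_per_round -- total patrols to distribute each round
    Returns the patrol share given to each district in every round.
    """
    records = list(initial_records)
    share_history = []
    for _ in range(rounds):
        total = sum(records)
        # Patrols are allocated in proportion to what has been recorded so far.
        shares = [r / total for r in records]
        share_history.append(shares)
        for i, share in enumerate(shares):
            # Incidents are only recorded where patrols are actually present.
            records[i] += patrols_per_round * share * true_rates[i]
    return share_history


# Both districts have the same underlying rate; only the historical records differ.
history = simulate_patrol_feedback([60, 40], [0.1, 0.1], 100, 5)
for round_number, shares in enumerate(history, start=1):
    print(round_number, [round(s, 3) for s in shares])
```

In this toy setup the two districts behave identically, yet the initial 60/40 split in the records never corrects itself, because each round's new records simply mirror where patrols were sent.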

These examples underscore the urgent need for a critical examination of the algorithms that increasingly govern our lives. The recognition of algorithmic bias as a significant issue can guide the development of more equitable and ethical technological solutions, which can help to mitigate the negative impact of these biases on society.

The Causes of Algorithmic Bias in Machine Learning Models

Algorithmic bias has emerged as a significant concern in the realm of artificial intelligence and machine learning. The quest for fairness in technology is often undermined by inherent biases that can stem from various sources. Understanding the causes of these biases is crucial for developing more equitable machine learning models. Notably, the quality of data and representation issues play pivotal roles in perpetuating algorithmic bias.

Data quality stands out as a primary factor contributing to algorithmic bias. Inaccurate, incomplete, or outdated data can misinform the learning process of algorithms, leading to skewed interpretations and outcomes. For instance, if a dataset used to train a facial recognition model predominantly features lighter-skinned individuals, the model may struggle to accurately identify darker-skinned faces. This form of bias in the training set directly translates into biased performance when the model is deployed in real-world scenarios.
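One way to surface this kind of gap is to report accuracy separately for each group instead of as a single aggregate figure. The sketch below is illustrative only: the function name `accuracy_by_group`, the group labels, and the toy predictions are assumptions, not data from any real system.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Return {group: accuracy}, computed only over the samples in that group."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        if truth == pred:
            correct[group] += 1
    return {g: correct[g] / total[g] for g in total}


# Hypothetical evaluation set in which one group is underrepresented.
y_true = [1, 1, 0, 1, 0, 1, 1, 0]
y_pred = [1, 1, 0, 1, 0, 0, 1, 1]
groups = ["lighter"] * 5 + ["darker"] * 3

overall = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
per_group = accuracy_by_group(y_true, y_pred, groups)
print("overall:", overall)
print("per group:", per_group)
```

The aggregate accuracy here looks acceptable, while the per-group breakdown shows that nearly all of the errors fall on the smaller group.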

Another critical element is representation issues within the training data. When certain groups are underrepresented or overrepresented, algorithms are more likely to reflect those disparities. For example, a predictive policing algorithm trained on historical crime data might disproportionately target minority communities if those populations were overrepresented in past police reports. This perpetuates a cycle of bias where the algorithm reinforces existing societal prejudices rather than alleviating them.
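A basic representation check compares each group's share of the training data with its share in a reference population. This is a minimal sketch under stated assumptions: `representation_gap` is a hypothetical helper, and the reference shares would have to come from a source appropriate to the application (census data, the served user base, and so on).

```python
from collections import Counter


def representation_gap(groups, reference_shares):
    """Return {group: dataset_share - reference_share}.

    Positive values mean the group is overrepresented in the dataset,
    negative values mean it is underrepresented.
    """
    counts = Counter(groups)
    n = len(groups)
    return {
        group: counts.get(group, 0) / n - share
        for group, share in reference_shares.items()
    }


# Hypothetical dataset: group "A" supplies 80% of the rows,
# but only 50% of the reference population.
dataset_groups = ["A"] * 8 + ["B"] * 2
gaps = representation_gap(dataset_groups, {"A": 0.5, "B": 0.5})
print({g: round(v, 2) for g, v in gaps.items()})
```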

Impact of Biased Training Datasets

The consequences of biased training datasets can be severe, leading to unfair outcomes that disproportionately affect certain groups. When algorithms are trained on datasets that reflect historical inequalities, they can inadvertently propagate these biases in their predictions and decisions. The ramifications can be grave, as seen in various instances across sectors such as hiring, law enforcement, and healthcare.

For instance, an algorithm used for recruitment that learns from historical hiring data may favor candidates who resemble those previously hired, effectively sidelining qualified applicants from diverse backgrounds. This not only reinforces the existing biases within organizations but also limits opportunities for underrepresented groups.

In the realm of healthcare, machine learning models trained on biased data can result in misdiagnoses or inadequate treatment recommendations for certain populations. A study found that an algorithm used to assess the risk of health complications was less accurate for Black patients, largely due to a training dataset that poorly represented their medical history. As a result, these biases can lead to significant disparities in health outcomes, further entrenching inequality.

Human bias is another significant factor that can be inherited by algorithms. Developers and data scientists may unknowingly embed their own biases into models through their subjective interpretations of data. This can occur at various stages of model development, from data collection and selection to feature engineering and algorithm design. One example is biased labeling in supervised learning: the judgments of human annotators can reflect societal biases, which then become part of the training dataset.

Moreover, biases can be systemic, arising from broader societal norms and behaviors. When machine learning models are deployed in sensitive areas such as criminal justice or lending, they can reinforce existing societal inequalities. The outcomes of biased algorithms may lead to unfair treatment, such as higher loan denial rates for specific demographics, further alienating already marginalized communities.

In conclusion, the causes of algorithmic bias are multifaceted, anchored in data quality, representation issues, and human biases. Understanding these factors is essential for creating fairer and more reliable machine learning systems that do not perpetuate existing societal inequities.

The Impact of Algorithmic Bias on Social Justice

Algorithmic bias poses significant risks to social justice, particularly for marginalized communities who already face systemic inequalities. When algorithms designed to make decisions in areas like hiring, law enforcement, and lending are influenced by biased data or flawed programming, the implications can be devastating. These biased algorithms not only affect individual lives but also perpetuate broader societal injustices, reinforcing existing disparities and obstructing efforts toward equity and inclusion.

The perpetuation of systemic inequalities through biased algorithms stems from their foundational reliance on historical data, which often reflects past prejudices. For instance, if an algorithm is trained on data that contains historical patterns of discrimination against certain ethnic groups, it may inadvertently replicate these biases in its decision-making processes. This can lead to unfair treatment in critical areas such as criminal justice, where biased algorithms may predict higher recidivism rates for minority groups based solely on historical arrest data, not actual behavior. The implications here are profound, as lives are affected by decisions that lack transparency and accountability.

Case Studies Illustrating Detrimental Effects

Several notable case studies highlight the real-world consequences of algorithmic bias, showcasing its negative impact on specific populations. One significant example involves the use of predictive policing algorithms. These systems often rely on historical crime data, which disproportionately reflects policing practices in minority neighborhoods. As a result, areas with high police presence due to past biases may see increased surveillance and aggressive policing tactics, leading to a cycle of over-policing and community mistrust.

Another case involves hiring algorithms used by large tech companies. In 2018, it was revealed that Amazon had to scrap an algorithmic hiring tool because it favored male candidates. The algorithm was trained on resumes submitted over a ten-year period, which were predominantly from men, leading to a bias against women applicants. This incident underscores how reliance on historical data can entrench gender disparities in the workforce, limiting opportunities for qualified individuals based solely on their gender.

Healthcare is also not immune to the effects of algorithmic bias. A study published in 2019 found that an algorithm used to determine eligibility for healthcare programs exhibited racial bias, misidentifying Black patients as less sick than their white counterparts, even when they had similar health needs. This bias can result in minority patients receiving inadequate care, exacerbating health disparities and undermining the principle of equitable access to healthcare services.

Understanding these case studies is crucial for recognizing the broader implications of algorithmic bias on social justice. They serve as stark reminders that without intervention, algorithms can entrench existing inequalities, making it difficult for marginalized communities to achieve fair treatment in society. Addressing algorithmic bias requires a comprehensive approach that includes diverse data sets, inclusive algorithm design, and constant monitoring to ensure fairness and accountability in automated decision-making processes.

Strategies for Mitigating Algorithmic Bias in AI Development

Mitigating algorithmic bias is crucial in developing fair and equitable AI systems. As AI technologies become increasingly integrated into various facets of our lives, understanding and addressing the biases that can arise during the design and implementation phases is essential. This discussion will explore effective strategies and best practices aimed at minimizing algorithmic bias, highlighting the importance of diverse teams and providing a clear overview of specific methods.

Best Practices for Bias Mitigation

Implementing sound practices during the AI development lifecycle is fundamental to reducing bias. It is essential to adopt a multi-faceted approach that considers the entire lifecycle of AI models. The following strategies are particularly effective:

1. Data Diversity and Quality: Ensuring that the training data is comprehensive and representative is paramount. Diverse datasets help capture a wide array of perspectives and prevent the system from inheriting historical biases. For example, Google’s AI ethics team stresses the importance of including underrepresented groups to avoid skewed outcomes.

2. Bias Auditing: Regularly auditing AI models for bias can help identify and rectify issues early in the process. Fairness metrics can be applied to assess how the algorithm treats different demographic groups. Companies like IBM have developed tools specifically for bias detection in AI, such as the open-source AI Fairness 360 toolkit.

3. Human-in-the-Loop Approaches: Integrating human oversight into AI decision-making processes ensures that subjective judgments can be made where necessary. This can be particularly important in sensitive applications, such as hiring or law enforcement, where nuanced understanding is required.

4. Transparent Algorithms: Promoting transparency in algorithm design helps stakeholders understand how decisions are made. This can involve open-sourcing code or providing clear documentation on how algorithms function, which enhances accountability and trust.

5. Iterative Testing and Feedback: Employing an iterative approach allows for continuous improvement of AI systems. Feedback from users, especially those from diverse backgrounds, can provide invaluable insights that help refine the system and reduce bias.

6. Collaborative Development: Engaging with external experts or communities can provide fresh perspectives and enhance the understanding of bias in AI systems. Collaborations with sociologists, ethicists, and community representatives can lead to more informed AI design choices.

7. Training for Developers: Educating developers about bias, its sources, and its implications is crucial. Workshops and training programs can raise awareness and equip teams with the necessary tools to recognize and mitigate bias.

These methods collectively contribute to a more equitable technological landscape, showcasing the importance of thoughtful design and implementation processes.
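The bias-auditing step in the list above can be sketched with two commonly used group fairness metrics: the difference and the ratio between per-group selection rates. The function names and toy predictions below are assumptions for illustration; dedicated fairness libraries provide more complete implementations.

```python
from collections import defaultdict


def selection_rates(y_pred, groups):
    """Share of positive (favorable) predictions per group."""
    positives = defaultdict(int)
    totals = defaultdict(int)
    for pred, group in zip(y_pred, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(rates):
    """Gap between the highest and lowest selection rate (0 means parity)."""
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else float("nan")


# Hypothetical model decisions (1 = approved) for two groups of four people.
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = selection_rates(y_pred, groups)
print("selection rates:", rates)
print("parity difference:", demographic_parity_difference(rates))
print("impact ratio:", round(disparate_impact_ratio(rates), 3))
```

A ratio below 0.8 is sometimes used as a rough screening threshold (the "four-fifths rule" from US employment guidelines), though no single cutoff settles whether a system is fair.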

Importance of Diverse Teams

Diversity within development teams plays a pivotal role in mitigating algorithmic bias. Teams that are composed of individuals with varying backgrounds, experiences, and perspectives are better equipped to recognize and address potential biases in AI systems. Such teams can ensure that the nuances of different populations are considered during the development process.

The effectiveness of diverse teams stems from their ability to approach problems from multiple angles, leading to more innovative and effective solutions. When varied perspectives are included, it helps create AI systems that are aligned with the needs of a broader audience, ultimately fostering inclusivity. A notable example is the collaboration between various tech companies and minority-focused organizations to develop AI tools that better serve underrepresented communities.

Summary of Strategies for Bias Mitigation

The following table summarizes various strategies along with their potential effectiveness in reducing algorithmic bias:

| Strategy | Potential Effectiveness |
|---|---|
| Data Diversity and Quality | High – Ensures representation and reduces historical bias. |
| Bias Auditing | Medium – Identifies issues, but requires ongoing commitment. |
| Human-in-the-Loop Approaches | High – Provides oversight and context to algorithmic decisions. |
| Transparent Algorithms | Medium – Increases accountability but may not directly reduce bias. |
| Iterative Testing and Feedback | High – Continuous improvement helps adapt to changing needs. |
| Collaborative Development | Medium – Broadens understanding, but depends on engagement. |
| Training for Developers | High – Empowers teams to recognize and address bias effectively. |

These strategies create a robust framework for addressing algorithmic bias, reinforcing the need for active measures in the development of AI systems. By prioritizing these approaches, organizations can contribute to the creation of technology that reflects fairness and equity.

The Role of Policy and Regulation in Addressing Algorithmic Bias

The increasing reliance on algorithms in various sectors has raised significant concerns surrounding algorithmic bias, necessitating intervention from government and regulatory bodies. The role of these entities is crucial as they work to create a framework that ensures fairness and accountability in algorithmic decision-making. Crafting effective policies and regulations requires a nuanced understanding of technology, social implications, and ethical considerations.

Regulatory bodies around the world are beginning to address algorithmic bias through a variety of legislative measures. For instance, the European Union’s General Data Protection Regulation (GDPR) includes provisions that enhance user rights concerning automated decisions, emphasizing transparency and accountability. The EU’s AI Act, adopted in 2024, establishes a legal framework for artificial intelligence, categorizing AI applications by risk level and imposing stricter requirements on high-risk systems. In contrast, the United States has seen a more fragmented approach, with states like California enacting laws that focus on data privacy and algorithmic accountability, while federal legislation remains less cohesive.

International Approaches to Regulating Algorithmic Bias

Comparative analysis of international strategies reveals varying degrees of effectiveness and adaptability. Countries like Canada and the UK have implemented frameworks that emphasize ethical AI and transparency. The UK’s AI Strategy includes guidelines that promote responsible AI use and the mitigation of bias, focusing on collaboration between public and private sectors. In contrast, China’s approach is more focused on state control, emphasizing algorithmic governance that aligns with national interests, but raises concerns regarding surveillance and data privacy.

The effectiveness of these approaches can often be traced back to their foundational philosophies:

- Transparency: Policies that require companies to disclose how algorithms work and the data used tend to foster greater accountability.

- Accountability: Regulations that impose penalties for biased outcomes lead to more cautious use of algorithms by organizations.

- Inclusivity: Frameworks encouraging diverse data sets help mitigate biases, ensuring fairer outcomes for marginalized groups.

Policymakers face numerous challenges when creating effective regulations for emerging technologies. The rapid pace of technological advancement often outstrips the speed at which regulations can be developed. Additionally, the complexity of algorithms presents difficulties in understanding and regulating them effectively.

“Effective regulation of algorithmic systems requires a balance between innovation and accountability.”

Furthermore, there is the challenge of aligning diverse stakeholder interests, including private companies seeking profit and the public’s demand for fairness and transparency. As a result, developing comprehensive and adaptive policies remains a persistent struggle in the face of evolving technology.

The Future of Algorithmic Bias and Ethical AI

As we look toward the future of algorithmic bias and ethical AI, it’s clear that this landscape is rapidly evolving. The integration of artificial intelligence into various sectors has prompted numerous discussions about fairness, accountability, and transparency. Addressing algorithmic bias is not just a technical challenge; it is an ethical imperative that shapes trust in AI systems. Companies and developers must prioritize ethical practices to ensure equitable outcomes across diverse populations while also adapting to the advancements in technology that seek to mitigate these biases.

Emerging Technologies and Methodologies

A variety of emerging technologies and methodologies are being implemented to confront the challenges of algorithmic bias. These innovations focus on creating algorithms that are not only efficient but also equitable. One primary approach involves the use of fairness-aware algorithms, which proactively incorporate fairness metrics into their design. This ensures that the outcomes produced by these algorithms are equitable across different demographic groups.
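One way a fairness metric can be built into a model's design is to add it as a penalty term to the training objective. The sketch below only evaluates such an objective; it does not train a model. The names `parity_gap` and `fairness_penalized_loss` and the weighting parameter `lam` are assumptions chosen for illustration, and the penalty used (the gap in mean predicted score between groups) is just one of several possible choices.

```python
import math
from collections import defaultdict


def log_loss(y_true, scores):
    """Average binary cross-entropy of predicted probabilities."""
    eps = 1e-12  # guards against log(0)
    total = 0.0
    for y, s in zip(y_true, scores):
        total += y * math.log(max(s, eps)) + (1 - y) * math.log(max(1 - s, eps))
    return -total / len(y_true)


def parity_gap(scores, groups):
    """Largest difference in mean predicted score between any two groups."""
    sums = defaultdict(float)
    counts = defaultdict(int)
    for s, g in zip(scores, groups):
        sums[g] += s
        counts[g] += 1
    means = [sums[g] / counts[g] for g in counts]
    return max(means) - min(means)


def fairness_penalized_loss(y_true, scores, groups, lam=1.0):
    """Accuracy term plus lam times the fairness penalty."""
    return log_loss(y_true, scores) + lam * parity_gap(scores, groups)


# Hypothetical scores: accurate overall, but very different across groups.
y_true = [1, 1, 0, 0]
scores = [0.9, 0.8, 0.3, 0.2]
groups = ["a", "a", "b", "b"]
print("plain loss:", round(log_loss(y_true, scores), 4))
print("penalized loss:", round(fairness_penalized_loss(y_true, scores, groups), 4))
```

Setting `lam` to 0 recovers the plain loss; larger values push an optimizer toward predictions with a smaller gap between groups, usually at some cost in raw accuracy.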

Another significant development is the integration of machine learning explainability tools. These tools aim to demystify black-box algorithms by providing insights into how decisions are made. This transparency is crucial for identifying potential biases and understanding the rationale behind algorithmic decisions. For instance, the use of interpretable models allows stakeholders to examine the decision-making process and its implications without requiring extensive technical expertise.
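For an interpretable model such as a linear scorer, an explanation can be as simple as listing how much each input contributed to the final score. The following sketch assumes a hypothetical credit-style model; the feature names, weights, and the helper `explain_linear_score` are invented for illustration.

```python
def explain_linear_score(weights, bias, features):
    """Return the score and each feature's contribution, largest effect first.

    For a linear model, score = bias + sum(weight * value), so each
    weight * value term is that feature's exact contribution.
    """
    contributions = {name: weights[name] * value for name, value in features.items()}
    score = bias + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return score, ranked


# Hypothetical model parameters and one applicant's (already scaled) features.
weights = {"income": 0.5, "debt_ratio": -2.0, "years_employed": 0.25}
applicant = {"income": 4.0, "debt_ratio": 0.5, "years_employed": 2.0}

score, ranked = explain_linear_score(weights, bias=-1.0, features=applicant)
print("score:", score)
for name, contribution in ranked:
    print(f"{name}: {contribution:+.2f}")
```

A breakdown like this lets a reviewer see which inputs drive a decision and check whether any of them act as a stand-in for a protected attribute.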

Furthermore, data preprocessing techniques such as re-sampling, re-weighting, or augmenting datasets are employed to minimize bias before it is even integrated into the algorithms. These methods help ensure that training datasets represent diverse populations, thus leading to more balanced outcomes. The commitment to using diverse datasets is paramount, as it directly impacts the performance and fairness of algorithms.
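As an example of re-weighting, each training row can be given a weight equal to the frequency its (group, label) combination would have if group and label were independent, divided by its observed frequency. This approach is often called reweighing. The sketch below is a minimal version under stated assumptions: `reweighing_weights` is a hypothetical helper and the data is a toy example.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Per-row weights w = P(group) * P(label) / P(group, label).

    Rows from (group, label) combinations that are rarer than independence
    would predict receive weights above 1; overrepresented ones fall below 1.
    """
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group):
    """Weighted share of positive labels within one group."""
    rows = [(y, w) for g, y, w in zip(groups, labels, weights) if g == group]
    return sum(w for y, w in rows if y == 1) / sum(w for _, w in rows)


# Hypothetical labels: group "a" has mostly positive outcomes, group "b" mostly negative.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
print([round(w, 3) for w in weights])
print(weighted_positive_rate(groups, labels, weights, "a"))
print(weighted_positive_rate(groups, labels, weights, "b"))
```

After weighting, both groups have the same weighted positive rate, so a learner that honors sample weights no longer sees group membership as predictive of the label in this toy data.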

Ethical Responsibilities of AI Developers and Companies

The ethical responsibilities of AI developers and companies extend beyond the technical aspects of algorithm design and implementation. Developers must adopt a proactive stance in recognizing the potential societal impacts of their algorithms. This includes conducting regular audits to identify biases, engaging with diverse stakeholders, and fostering a culture of accountability within organizations.

Moreover, companies are increasingly expected to establish ethical guidelines and frameworks for AI development. Incorporating ethical principles throughout the AI lifecycle—from conception to deployment—ensures that biases are identified and mitigated at each stage. Ethics committees or boards can facilitate discussions on the implications of AI technologies, promoting a collaborative environment that emphasizes ethical considerations.

Additionally, fostering trust is essential for the broader acceptance of AI technologies. Transparency in how algorithms are developed and how decisions are made plays a crucial role in building this trust. Companies must communicate effectively with their users and stakeholders about the limitations and potential risks associated with their AI systems.

Understanding the responsibility that comes with developing AI technologies can lead to more equitable outcomes and a greater societal impact. By prioritizing ethics in the AI field, companies not only enhance their reputations but also contribute to a fairer and more inclusive future for all.

Conclusion

In conclusion, algorithmic bias poses significant challenges that extend beyond technology into the very fabric of our society. As we have discussed, the impacts of biased algorithms can perpetuate systemic inequalities, underscoring the urgent need for proactive measures in AI development and regulation. By fostering diverse teams, implementing best practices, and advocating for responsible policies, we can work towards a future where technology serves everyone equitably. Ultimately, the responsibility lies not just with developers but with all stakeholders to ensure that our technological advancements reflect our shared values of fairness and justice.

Frequently Asked Questions

What is algorithmic bias?

Algorithmic bias refers to systematic errors in algorithms that result in unfair treatment of individuals or groups, often due to flawed data or design.

How can algorithmic bias affect decision-making?

It can lead to unjust outcomes in critical areas like hiring, lending, and law enforcement, affecting people’s lives and opportunities.

Can algorithmic bias be completely eliminated?

While it may not be possible to eliminate bias entirely, strategies can be employed to significantly reduce its impact.

What role do developers play in mitigating algorithmic bias?

Developers need to prioritize diversity in teams, use comprehensive datasets, and continuously test for biases throughout the development process.

Are there regulations addressing algorithmic bias currently?

Yes, various countries have started implementing regulations aimed at ensuring fairness and accountability in algorithmic decision-making.