Reinforcement Learning is reshaping the landscape of artificial intelligence by enabling agents to learn from their environments through trial and error. This fascinating approach mimics the way humans and animals learn, using a reward-based system to guide actions. By understanding the core concepts, algorithms, applications, challenges, and future trends, we gain insight into how Reinforcement Learning is revolutionizing various industries from gaming to healthcare.

The interplay between agents and their environments forms the backbone of Reinforcement Learning, fundamentally altering how machines interact with the world around them. With the potential for innovation and efficiency in diverse fields, exploring this topic reveals the powerful impact of these intelligent systems.

Fundamental concepts of Reinforcement Learning

Reinforcement Learning (RL) is a powerful machine learning paradigm that focuses on how agents ought to take actions in an environment to maximize cumulative rewards. Unlike supervised learning, where models are trained on labeled data, RL relies on the concept of trial and error, enabling agents to learn optimal behaviors based on the feedback they receive from their actions. This makes RL particularly significant in fields where decision-making processes can be complex and dynamic, such as robotics, game playing, and autonomous navigation.

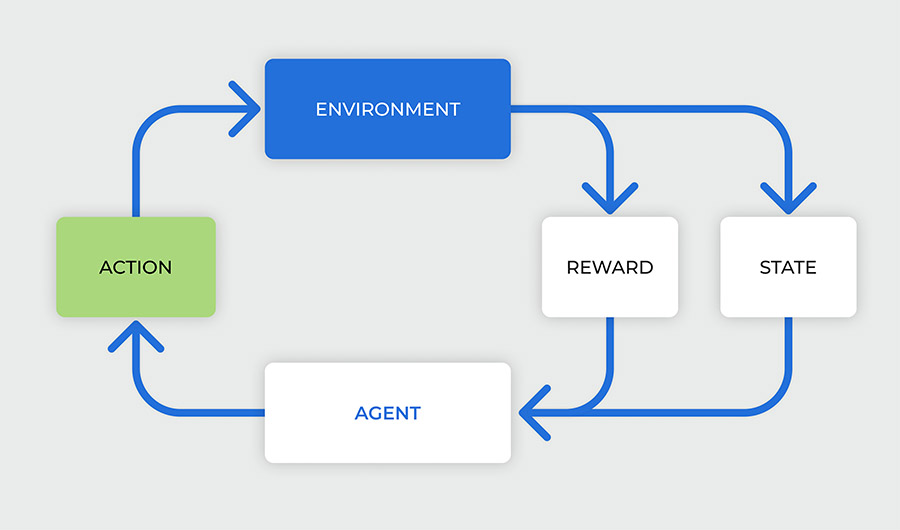

Central to Reinforcement Learning are several core components that interact in a systematic manner, forming the foundation of the learning process. These components include agents, environments, actions, states, and rewards. Understanding how these elements work together is critical for grasping the mechanics of RL.

Core components of Reinforcement Learning

The interaction between the core components of Reinforcement Learning is fundamental to its success. Each element plays a vital role in the learning process:

- Agent: The agent is the learner or decision-maker that interacts with the environment. It takes actions based on its policy, which is a strategy for selecting actions given a state.

- Environment: The environment encompasses everything the agent interacts with. It provides the context necessary for the agent to make decisions and learn from its experiences.

- Actions: Actions are the choices made by the agent that affect the state of the environment. The set of all possible actions available to the agent is known as the action space.

- States: States represent the current situation of the environment as perceived by the agent. The state space is the set of all possible states that the environment can be in.

- Rewards: Rewards are the feedback signals received by the agent from the environment after taking an action in a particular state. The goal of the agent is to maximize the total reward received over time.

To illustrate how these components interact, consider a simple scenario involving a robot navigating a maze. The robot is the agent and the maze is the environment. The robot can take actions such as moving forward, turning left, or turning right. Each position in the maze represents a different state, and the robot receives rewards for reaching certain locations, like the exit of the maze. If the robot takes a wrong turn, it may receive a negative reward, encouraging it to learn from its mistakes and adjust its actions accordingly. Through repeated interactions, the robot improves its policy, ultimately finding the most efficient path to the exit while maximizing its cumulative reward.
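The maze scenario above can be sketched as a short interaction loop. This is a minimal illustration, not a reference implementation: the one-dimensional corridor, the reward values (+10 at the exit, -1 per other move), and the names `step`, `run_episode`, `random_policy`, and `always_right` are all assumptions made for the example.

```python
import random

# Hypothetical 1-D "maze": cells 0..4, the exit is cell 4.
N_CELLS = 5
EXIT = N_CELLS - 1
ACTIONS = (-1, +1)  # move left, move right


def step(state, action):
    """Environment dynamics: return (next_state, reward, done)."""
    next_state = min(max(state + action, 0), EXIT)
    if next_state == EXIT:
        return next_state, 10.0, True    # reward for reaching the exit
    return next_state, -1.0, False       # small penalty for every other move


def run_episode(policy, rng, max_steps=100):
    """Run one episode with the given policy and return the cumulative reward."""
    state, total = 0, 0.0
    for _ in range(max_steps):
        action = policy(state, rng)                 # agent picks an action
        state, reward, done = step(state, action)   # environment responds
        total += reward
        if done:
            break
    return total


def random_policy(state, rng):
    """An untrained agent: pick a direction at random."""
    return rng.choice(ACTIONS)


def always_right(state, rng):
    """The best policy in this corridor: head straight for the exit."""
    return +1


rng = random.Random(0)
print("random policy:", run_episode(random_policy, rng))
print("always right: ", run_episode(always_right, rng))
```

The `always_right` policy pays the -1 penalty three times and then collects +10, for a return of 7.0, which is the highest return this toy environment allows. A learning agent starts out closer to `random_policy` and has to discover the better behavior from the rewards alone.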

In summary, the fundamental concepts of Reinforcement Learning revolve around the dynamic interactions of agents, environments, actions, states, and rewards. These components form a cohesive system that enables agents to learn optimal behaviors through experience, paving the way for advancements in various fields that require intelligent decision-making.

Types of Reinforcement Learning algorithms

Reinforcement Learning (RL) encompasses a diverse range of algorithms that are employed to tackle various problems in machine learning. Each algorithm has unique characteristics and suitability for specific tasks. Understanding these differences is crucial for selecting the right algorithm for a given situation. In this section, we will delve into some of the most common RL algorithms, their advantages and disadvantages, and the scenarios in which they shine.

Q-learning

Q-learning is a model-free, temporal-difference reinforcement learning algorithm that learns the value of taking each action in each state. After every step it moves its estimate of the action-value function, known as the Q-function, toward the observed reward plus the best estimated value of the next state, which makes it a sample-based counterpart of value iteration. The aim is to learn the optimal action-selection policy, the one that maximizes cumulative reward over time.
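The Q-function update is usually written as a single rule. The symbols are not introduced in the text above, so read them as standard notation rather than the article's own: \alpha is the learning rate, \gamma is the discount factor, s_t and a_t are the state and action at step t, and r_{t+1} is the reward that follows.

```latex
Q(s_t, a_t) \leftarrow Q(s_t, a_t)
  + \alpha \left[ r_{t+1} + \gamma \max_{a'} Q(s_{t+1}, a') - Q(s_t, a_t) \right]
```

The bracketed term is the temporal-difference error: the gap between the current estimate and a target built from the observed reward and the best estimated value of the next state.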

Advantages of Q-learning include:

- Model-free nature allows it to be applied without needing a model of the environment.

- Simple and easy to implement.

- Convergence to the optimal policy is guaranteed in the tabular setting, provided every state-action pair keeps being visited and the learning rate decays appropriately.

Disadvantages include:

- Poor performance in high-dimensional state spaces due to the curse of dimensionality.

- Slow convergence in environments with a large number of states and actions.

Q-learning is particularly effective in environments with discrete state and action spaces, such as simple board games (e.g., Tic-Tac-Toe) or basic navigation tasks.
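For a discrete task of the kind just mentioned, tabular Q-learning fits in a few lines. The sketch below is illustrative only: the five-cell corridor, the reward of 1.0 at the goal, and the hyperparameter values are assumptions chosen to keep the example small.

```python
import random

N_STATES = 5            # corridor cells 0..4; cell 4 is the goal (hypothetical task)
ACTIONS = (-1, +1)      # move left, move right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.1   # illustrative hyperparameters


def step(state, action):
    """Return (next_state, reward, done) for the toy corridor."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def train(episodes=500, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy behaviour policy; ties are broken at random.
            if rng.random() < EPSILON:
                action = rng.choice(ACTIONS)
            else:
                best = max(q[(state, a)] for a in ACTIONS)
                action = rng.choice([a for a in ACTIONS if q[(state, a)] == best])
            next_state, reward, done = step(state, action)
            # The target bootstraps from the greedy value of the next state.
            if done:
                target = reward
            else:
                target = reward + GAMMA * max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += ALPHA * (target - q[(state, action)])
            state = next_state
    return q


q = train()
# Greedy action in every non-goal state after training.
greedy = [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)]
print(greedy)
```

After training, the greedy action in every non-goal cell is `+1` (move right). The table holds one entry per state-action pair, which is why this approach stops scaling once the state space becomes large.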

Deep Q-Networks (DQN)

Deep Q-Networks extend the concept of Q-learning by utilizing deep neural networks to approximate the Q-function. This approach enables the handling of high-dimensional state spaces, making it applicable in complex environments.

The advantages of DQNs include:

- Ability to learn directly from raw sensory inputs, such as images, which is useful in applications like video games.

- Better generalization across similar states, reducing the need for an exhaustive state-action mapping.

However, DQNs also have disadvantages:

- Higher computational requirements due to training of deep networks.

- Stability issues during training, which can lead to oscillations in the Q-values without careful tuning of hyperparameters.

DQN is most effective in environments that involve complex visual inputs, such as playing video games like Atari or working with robotic vision systems.
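Two ingredients commonly paired with DQN to tame the instability noted above are an experience replay buffer and a separate, slowly updated target network. Neither is described in this article, so treat the sketch below as background under that assumption. It leaves out the neural network itself: `constant_q` is a hypothetical stand-in for the target network, and `ReplayBuffer` and `td_targets` are illustrative names.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-size store of (state, action, reward, next_state, done) tuples."""

    def __init__(self, capacity, seed=0):
        self.buffer = deque(maxlen=capacity)   # oldest transitions drop out first
        self.rng = random.Random(seed)

    def add(self, transition):
        self.buffer.append(transition)

    def sample(self, batch_size):
        # Uniform sampling breaks the correlation between consecutive steps.
        return self.rng.sample(list(self.buffer), batch_size)

    def __len__(self):
        return len(self.buffer)


def td_targets(batch, target_q, actions, gamma=0.99):
    """Compute r + gamma * max_a' Q_target(s', a') for each sampled transition."""
    targets = []
    for _state, _action, reward, next_state, done in batch:
        # Terminal transitions do not bootstrap from the next state.
        bootstrap = 0.0 if done else max(target_q(next_state, a) for a in actions)
        targets.append(reward + gamma * bootstrap)
    return targets


def constant_q(state, action):
    """Hypothetical stand-in for a frozen target network."""
    return 1.0


buffer = ReplayBuffer(capacity=3)
for t in range(5):
    buffer.add((t, 0, 0.5, t + 1, t == 4))   # only the last transition is terminal

batch = buffer.sample(2)
print(len(buffer), td_targets(batch, constant_q, actions=(0, 1)))
```

In a full DQN the targets returned by `td_targets` would serve as regression labels for the online network, and the target network's weights would be refreshed from the online network only every so often.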

Policy Gradients

Policy Gradient methods represent a class of algorithms that directly optimize the policy instead of the value function. They adjust the policy parameters based on the gradient of expected rewards, which can often lead to improved performance in continuous action spaces.

Advantages of Policy Gradients include:

- Ability to handle high-dimensional and continuous action spaces effectively.

- More stable training compared to Q-learning in certain environments.

The disadvantages include:

- Can converge to local optima rather than global ones.

- Gradient estimates tend to have high variance, so these methods typically require more samples to converge, leading to higher computational demands.

Policy Gradient methods are particularly effective in robotics and control tasks where actions are continuous, such as robotic arm manipulation or autonomous vehicle navigation.
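The core idea, nudging policy parameters along the gradient of expected reward, can be shown with REINFORCE, one of the simplest policy gradient methods, on a two-action problem. This is a sketch under stated assumptions: the softmax parameterization, the fixed rewards of 0.2 and 1.0, the learning rate, and the absence of a baseline are all choices made for illustration, and the action space is discrete to keep the code short.

```python
import math
import random


def softmax(prefs):
    """Turn action preferences into a probability distribution."""
    m = max(prefs)
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def reinforce_bandit(rewards, steps=3000, lr=0.1, seed=0):
    """REINFORCE with one action per episode and no baseline."""
    rng = random.Random(seed)
    theta = [0.0] * len(rewards)          # policy parameters (action preferences)
    for _ in range(steps):
        probs = softmax(theta)
        action = rng.choices(range(len(rewards)), weights=probs)[0]
        reward = rewards[action]
        # For a softmax policy, d/d theta[k] of log pi(action) is
        # 1[k == action] - probs[k].
        for k in range(len(theta)):
            grad_log = (1.0 if k == action else 0.0) - probs[k]
            theta[k] += lr * reward * grad_log
    return softmax(theta)


probs = reinforce_bandit(rewards=[0.2, 1.0])
print([round(p, 3) for p in probs])
```

The policy ends up putting most of its probability on the second, higher-paying action. Note that no value table is involved: the update acts on the policy parameters directly, which is what lets the same idea carry over to continuous actions when the softmax is swapped for, say, a Gaussian.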

Applications of Reinforcement Learning across industries

Reinforcement Learning (RL) has emerged as a powerful tool across various industries, driving innovation and enhancing operational efficiency. By leveraging algorithms that learn optimal actions through trial and error in dynamic environments, RL is transforming how businesses approach complex challenges. This discussion highlights the applications of RL in key sectors such as robotics, gaming, healthcare, and finance, showcasing its impact on these fields through compelling case studies.

Applications in Robotics

In the realm of robotics, Reinforcement Learning enables machines to learn and adapt to their environments, improving their performance over time. For instance, RL is widely applied in robotic manipulation tasks, where robots learn to grasp objects or navigate complex terrains. A notable example is Google’s DeepMind, which trained robots to complete various tasks by manipulating objects in a simulated environment. This approach resulted in robots capable of performing intricate tasks with minimal human intervention.

The impact of RL in robotics has been significant, resulting in enhanced precision and adaptability. Robots can now operate in unpredictable scenarios, which is crucial for industries such as manufacturing and logistics.

Applications in Gaming

Reinforcement Learning has had a major impact on game-playing AI by enabling the development of highly capable game agents. One of the most prominent examples is OpenAI Five, OpenAI’s Dota 2 agent, which learned to play the complex game by competing against itself and refining its strategies over millions of matches. Similarly, DeepMind’s AlphaGo combined RL with supervised learning and tree search to defeat world champion Lee Sedol at the ancient game of Go in 2016, showcasing the technology’s potential to master strategic thinking.

The incorporation of RL in gaming enhances player experiences by creating adaptive and challenging opponents, ultimately driving engagement and innovation in game design.

Applications in Healthcare

In healthcare, Reinforcement Learning is utilized to optimize treatment plans and improve patient outcomes. RL algorithms can analyze vast amounts of patient data to determine the most effective treatment pathways. For example, researchers have implemented RL to personalize diabetes management, where the algorithm adjusts insulin delivery based on real-time glucose levels.

The potential impact of RL in healthcare is considerable: beyond improving patient care, it could reduce hospital costs by cutting down on the trial-and-error approach often used in treatment decisions.

Applications in Finance

Reinforcement Learning is making waves in the finance sector by optimizing trading strategies and risk management. Financial institutions deploy RL algorithms to analyze market trends and execute trades in real time. A frequently cited example is JPMorgan Chase, which has reportedly applied RL to trade execution in its algorithmic trading systems.

The influence of RL in finance extends to fraud detection, where models learn to identify unusual patterns in transactions, thereby mitigating risks and losses. This has led to innovative financial products and services that cater to the evolving needs of clients.

Case Studies of Successful Implementations

Several organizations have successfully integrated Reinforcement Learning into their operations, yielding impressive results. Below are a few case studies that highlight these achievements:

- DeepMind’s AlphaGo: This project demonstrated RL’s ability to learn complex strategy games, achieving unprecedented success and reshaping AI’s reputation in competitive domains.

- Google’s Robotics: Through advanced RL techniques, Google’s robotic systems have improved at manipulation tasks such as grasping, the kind of capability that automated warehouse operations depend on.

- JPMorgan Chase’s Trading Algorithms: By incorporating RL, the bank has reportedly improved how its algorithms execute trades.

- Personalized Healthcare Solutions: Companies leveraging RL for personalized medicine have shown improved patient management strategies, indicating a shift towards more data-driven healthcare approaches.

The integration of Reinforcement Learning across these industries not only optimizes processes but also fosters innovation, driving businesses to rethink traditional models and embrace a data-centric approach.

Challenges and limitations of Reinforcement Learning

Reinforcement Learning (RL) has gained significant traction in the field of artificial intelligence, enabling systems to learn optimal behaviors through trial and error. However, several challenges and limitations arise when practitioners attempt to implement RL in real-world applications. These challenges not only stem from technical hurdles but also from ethical considerations that can influence algorithmic fairness and accountability.

Major challenges faced by practitioners in applying Reinforcement Learning

Implementing Reinforcement Learning effectively requires addressing specific challenges that can impede performance and usability. The following points highlight the primary obstacles faced by practitioners in the RL landscape:

- Sample efficiency: RL often requires a vast amount of data (interactions with the environment) to learn effectively, which can be resource-intensive and time-consuming.

- Exploration versus exploitation trade-off: Striking the right balance between exploring new actions and exploiting known beneficial ones is crucial but difficult, leading to suboptimal learning if not managed well.

- Stability and convergence: Many RL algorithms can exhibit instability during training, leading to non-convergence or fluctuating performance metrics.

- High-dimensional state spaces: Real-world environments often present complex state spaces, making it challenging for agents to learn effective policies without significant computational resources.

- Reward design: Crafting appropriate reward structures that accurately reflect desired outcomes is often non-trivial and can significantly influence learning behavior.

Ethical considerations and potential biases in algorithms

As RL systems become more integrated into various sectors, ethical considerations and biases present significant challenges. The algorithms can inadvertently propagate existing biases when trained on flawed or biased data. This can lead to unfair practices in applications such as hiring, loan approvals, and law enforcement, where the consequences of biased RL models can be severe.

“An RL agent that learns from biased data is likely to reinforce and perpetuate existing disparities.”

To mitigate these concerns, it is essential to adopt strategies that promote fairness and accountability. Ensuring diverse and representative training data, alongside transparent model evaluation protocols, can help identify and address biases.

Best practices and techniques to mitigate challenges

Practitioners can employ several best practices and techniques to overcome the challenges associated with Reinforcement Learning. The following strategies are crucial in improving RL outcomes:

- Use simulated environments: Training RL agents in simulated environments can enhance sample efficiency and reduce training costs, allowing for quicker iterations and refinements.

- Implement advanced exploration strategies: Techniques such as Upper Confidence Bound (UCB) or Thompson Sampling can improve the exploration-exploitation balance and enhance learning efficiency.

- Apply regularization techniques: Incorporating regularization methods can help stabilize training and improve convergence by addressing overfitting concerns.

- Leverage transfer learning: Utilizing knowledge gained from one task to improve performance in a related task can significantly reduce the amount of training required.

- Establish robust reward structures: Iteratively refining reward signals based on agent performance and real-world feedback can lead to more effective learning outcomes.
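Of the techniques listed above, Upper Confidence Bound is compact enough to sketch. The version below is UCB1 on a multi-armed bandit, the setting where the method originates. The Bernoulli arms, their success probabilities, and the exploration constant `c=2.0` are assumptions for illustration.

```python
import math
import random


def ucb1(pull, n_arms, rounds, c=2.0):
    """UCB1: play the arm with the highest mean reward plus exploration bonus."""
    counts = [0] * n_arms
    means = [0.0] * n_arms
    for t in range(1, rounds + 1):
        if t <= n_arms:
            arm = t - 1                      # play every arm once first
        else:
            # The bonus shrinks as an arm is pulled more often, so rarely
            # tried arms keep getting a second look.
            arm = max(
                range(n_arms),
                key=lambda a: means[a] + math.sqrt(c * math.log(t) / counts[a]),
            )
        reward = pull(arm)
        counts[arm] += 1
        means[arm] += (reward - means[arm]) / counts[arm]   # incremental mean
    return counts, means


# Hypothetical Bernoulli arms; the success probabilities are made up.
TRUE_PROBS = (0.2, 0.5, 0.8)
rng = random.Random(0)


def pull(arm):
    return 1.0 if rng.random() < TRUE_PROBS[arm] else 0.0


counts, means = ucb1(pull, n_arms=len(TRUE_PROBS), rounds=2000)
print(counts)
```

Unlike purely random exploration, UCB directs its exploratory pulls toward arms whose value is still uncertain, so the pull counts concentrate on the best arm while the weaker arms are sampled just often enough to rule them out.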

Future trends of Reinforcement Learning

The landscape of Reinforcement Learning (RL) is rapidly evolving, driven by advancements in technology, research, and its applications across various fields. As industries increasingly adopt RL, understanding future trends is crucial for stakeholders aiming to leverage its full potential. This exploration will delve into emerging technologies, advancements in hardware and software, and their implications for the future of artificial intelligence (AI).

Emerging Trends and Technologies

Several trends are shaping the future of Reinforcement Learning, significantly impacting its effectiveness and reach. The interplay of RL with other fields and technologies is pivotal, including:

- Integration with Deep Learning: The convergence of RL and deep learning techniques is creating powerful models capable of solving complex problems. For instance, DeepMind’s AlphaGo showcased RL’s power in mastering the game of Go, highlighting the synergy between these domains.

- Increased Adoption of Transfer Learning: Transfer learning allows RL models to apply knowledge gained from one task to another, reducing training time and data requirements. This capability is particularly useful in scenarios with limited data availability.

- Real-time Learning and Adaptation: Future RL systems will increasingly feature adaptive algorithms that learn in real-time from user interactions, enhancing personalization and user experience.

- Explainable AI (XAI) in RL: As RL applications grow in critical sectors such as healthcare and finance, the need for transparency becomes imperative. XAI aims to make RL decision processes understandable, fostering trust among users.

Advancements in Hardware and Software

The performance of Reinforcement Learning is heavily influenced by the underlying hardware and software technologies. Notable advancements include:

- High-Performance Computing (HPC): The development of more powerful GPUs and TPUs enables faster processing of complex RL algorithms, allowing researchers to experiment with larger datasets and more intricate models.

- Cloud Computing Solutions: Cloud platforms provide scalable resources for training large RL models, facilitating collaborative research and development. For instance, platforms like Google Cloud AI and AWS SageMaker allow data scientists to deploy RL models efficiently.

- Frameworks and Libraries: Deep learning frameworks such as TensorFlow and PyTorch, together with environment toolkits such as OpenAI’s Gym (now maintained as Gymnasium), simplify the implementation of RL algorithms, encouraging more developers and researchers to explore this field.

- Specialized Hardware for AI: The rise of dedicated AI chips and neuromorphic computing solutions is set to enhance the efficiency of RL models, allowing for faster and more energy-efficient computations.

Impact on the Landscape of Artificial Intelligence

The trends and advancements in Reinforcement Learning are not only transforming the field itself but also influencing AI’s broader trajectory. Key implications include:

- Enhanced Decision-Making: RL enables machines to make better decisions through trial and error, improving automation in industries like logistics, finance, and robotics.

- Personalization of Services: RL algorithms can tailor services based on user behavior, leading to more personalized experiences in sectors such as e-commerce and entertainment.

- Robust AI Systems: The integration of RL with other AI methodologies can create more robust systems capable of handling complex real-world challenges, such as climate modeling and autonomous driving.

- Ethical Considerations and Regulation: As RL systems become more prevalent, addressing ethical concerns related to autonomous decision-making will be essential. This will likely lead to the establishment of regulatory frameworks governing RL applications.

“The future of Reinforcement Learning lies in its ability to adapt, learn, and integrate with emerging technologies, paving the way for smarter and more efficient AI systems.”

The role of exploration and exploitation in Reinforcement Learning

In Reinforcement Learning (RL), an agent learns to make decisions by interacting with an environment. A fundamental aspect of this learning process is the trade-off between exploration and exploitation. Understanding this balance is crucial for effectively training algorithms that can adapt and improve over time.

The agent must explore new actions to discover their potential rewards, while simultaneously exploiting known actions that yield high rewards. This balance can significantly influence the performance of the learning agent. If an agent focuses too heavily on exploitation, it may miss out on better opportunities that could arise from exploring new actions. Conversely, excessive exploration can lead to wasted time and resources on actions that are not beneficial. Striking the right balance is essential for an efficient learning process.

Importance of Balancing Exploration and Exploitation

The balance of exploration and exploitation can be illustrated through various scenarios. In each of these examples, the consequences of the chosen strategy are evident:

1. Epsilon-Greedy Approach:

In this common strategy, the agent primarily exploits known rewarding actions but, with a small probability (epsilon), explores by taking a random action instead. For instance, if an agent is playing a slot machine game with multiple machines and epsilon is 0.1, it plays its best-performing machine about 90% of the time (exploitation) and picks a machine at random the remaining 10% of the time (exploration). This ensures that it capitalizes on its existing knowledge while still allowing room for discovering potentially better options.

2. Multi-Armed Bandit Problem:

This classic problem exemplifies the exploration-exploitation dilemma in a structured format. Imagine a gambler faced with multiple slot machines, each with a different unknown payout. If the gambler only plays the machine that seems to pay off, they may miss a more lucrative option. An effective strategy would allow the gambler to explore different machines to assess their payouts while still playing the one that has proven fruitful.

3. Robotic Navigation:

In a scenario where a robot is tasked with navigating an unknown environment, it can either stick to paths it has previously identified as successful (exploitation) or venture into uncharted areas (exploration). If the robot only exploits, it may fail to find shortcuts or more efficient pathways that could save time in completing its task.

4. Game Playing:

Consider a reinforcement learning agent playing chess. By exploiting known winning strategies, it might consistently win against less skilled opponents. However, to improve its game against stronger players, it must explore unconventional moves and strategies to adapt and enhance its skills.
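The epsilon-greedy approach from the first scenario, applied to the slot machines of the second, can be sketched as follows. The payout probabilities and the function names are assumptions for illustration. Epsilon is set to 0.1 to match the 90%/10% split described above.

```python
import random


def epsilon_greedy(estimates, epsilon, rng):
    """Pick a random arm with probability epsilon, else the best-looking arm."""
    if rng.random() < epsilon:
        return rng.randrange(len(estimates))     # explore (may also hit the best arm)
    return max(range(len(estimates)), key=lambda a: estimates[a])   # exploit


def run_bandit(true_probs, epsilon=0.1, rounds=5000, seed=0):
    """Play a Bernoulli bandit and return pull counts and payout estimates."""
    rng = random.Random(seed)
    counts = [0] * len(true_probs)
    estimates = [0.0] * len(true_probs)
    for _ in range(rounds):
        arm = epsilon_greedy(estimates, epsilon, rng)
        reward = 1.0 if rng.random() < true_probs[arm] else 0.0
        counts[arm] += 1
        # Incremental average of the rewards seen on this arm.
        estimates[arm] += (reward - estimates[arm]) / counts[arm]
    return counts, estimates


# Hypothetical machines with made-up payout probabilities.
counts, estimates = run_bandit(true_probs=(0.3, 0.5, 0.7))
print(counts)
print([round(e, 2) for e in estimates])
```

With `epsilon=0.0` the agent never leaves whichever machine looked best first, and with `epsilon=1.0` it never uses what it has learned. Values in between let the payout estimates for all machines improve while most pulls still go to the current favorite.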

A visual representation can make the exploration-exploitation trade-off easier to grasp. Imagine a graph with the share of steps spent exploring on the x-axis, running from pure exploitation on the left to pure exploration on the right, and the cumulative reward on the y-axis. At either extreme the agent does poorly: with no exploration it can lock onto a mediocre action, and with nothing but exploration it never uses what it has learned. The curve typically peaks somewhere in between, and that peak marks the balanced strategy that leads to the most learning and reward.

By comprehensively examining these scenarios, it becomes evident how critical the dynamic interplay of exploration and exploitation is within Reinforcement Learning. The agent’s capability to adapt and learn hinges on its strategic approach to these two concepts.

Reinforcement Learning compared with other machine learning paradigms

Reinforcement Learning (RL) stands out as a unique paradigm within the broader field of machine learning, primarily due to its focus on decision-making and learning through interaction with an environment. Unlike other types of learning, such as supervised and unsupervised learning, RL emphasizes the idea of learning optimal actions through trial and error, which makes it distinct in both its approach and applications.

Differences from Supervised and Unsupervised Learning

Reinforcement Learning differs fundamentally from supervised and unsupervised learning in terms of how it learns from data. In supervised learning, algorithms are trained on labeled data, meaning the input data is paired with the correct output. The model learns to predict outcomes based on this historical data. Conversely, unsupervised learning deals with unlabeled data and focuses on finding patterns or groupings within the dataset without explicit feedback or guidance.

The key differences can be summarized as follows:

- Feedback Mechanism: In supervised learning, feedback is explicit and immediate, while in RL, feedback is typically delayed and derived from the environment based on the actions taken.

- Learning Objective: Supervised learning aims to minimize prediction error, while RL seeks to maximize cumulative rewards through a strategy of exploration and exploitation.

- Data Requirements: Supervised learning requires a comprehensive dataset with labeled responses, whereas RL can work with data generated through exploration, making it suitable for dynamic environments.

Unique Features of Reinforcement Learning

Reinforcement Learning has several unique features that set it apart from other learning paradigms. These characteristics highlight its potential in solving complex problems that require adaptive strategies. Key features include:

- Exploration vs. Exploitation: RL algorithms must balance exploring new strategies to discover potentially better rewards while exploiting known strategies that yield high rewards.

- Sequential Decision Making: RL focuses on making a series of decisions where each action can influence future states and actions, differentiating it from the single-instance predictions of supervised learning.

- Dynamic Environments: RL is particularly effective in environments that are continuously changing, making it ideal for real-time applications like robotics, gaming, and autonomous vehicles.

Scenarios Where Reinforcement Learning Excels

Reinforcement Learning shines in scenarios that involve complex decision-making with delayed rewards. Some prominent examples include:

- Game Playing: RL has achieved remarkable success in strategic games. For instance, AlphaGo used RL to become the first AI to defeat a world champion Go player, showcasing its ability to learn intricate strategies.

- Robotics: In robotic control, RL enables robots to learn tasks through interaction with their environment, allowing them to adapt to unexpected changes and optimize their movements in real-time.

- Autonomous Vehicles: RL is utilized in self-driving cars, where the vehicle learns to navigate complex traffic scenarios through trial and error, leading to better decision-making in uncertain conditions.

The importance of reward structures in Reinforcement Learning

In Reinforcement Learning (RL), reward structures play a pivotal role in shaping how agents learn optimal behaviors to achieve their goals. The concept of reward is integral to the RL paradigm, serving as the feedback mechanism that guides agents through their learning journeys. Understanding the significance of reward structures allows for the development of more effective training environments and strategies, ultimately enhancing the performance of RL systems across various applications.

Reward signals directly influence the learning process by determining the desirability of actions taken by the agent. When an agent receives a reward, it reinforces the behavior that led to that outcome, effectively teaching the agent which actions are beneficial in achieving its objectives. Conversely, negative rewards or penalties discourage certain behaviors, steering the agent away from actions that yield poor results. This feedback loop is crucial for the agent’s ability to navigate complex environments and improve over time.
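What the agent actually maximizes is a sum of rewards over time, and in practice later rewards are often weighted less than earlier ones. The discount factor `gamma` below is a common convention rather than something defined in this article, so treat it as an assumption. Setting it to 1.0 gives the plain cumulative reward.

```python
def discounted_return(rewards, gamma=0.99):
    """Sum of rewards where a reward t steps ahead is weighted by gamma**t."""
    g = 0.0
    # Work backwards so each step is a single multiply-and-add.
    for reward in reversed(rewards):
        g = reward + gamma * g
    return g


episode = [0.0, 0.0, 1.0]   # the only reward arrives at the last step
print(discounted_return(episode, gamma=1.0))   # 1.0: plain cumulative reward
print(discounted_return(episode, gamma=0.5))   # 0.25: the late reward is discounted twice
```

A smaller `gamma` makes the agent care more about immediate feedback, which is one reason delayed rewards are harder to learn from.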

Types of reward structures and their impact on learning outcomes

Different types of reward structures can significantly impact the learning outcomes of an RL agent. These structures can be broadly categorized into sparse, dense, and shaped rewards, each with distinct effects on the learning efficiency and outcome.

- Sparse Rewards: In scenarios where rewards are infrequent and only given upon achieving specific goals, agents may face challenges in navigating the environment effectively. While sparse rewards can lead to robust learning in some contexts, they often result in slow convergence rates. For example, in a game where an agent receives a reward only after winning, it may take a long time for the agent to learn the optimal strategy.

- Dense Rewards: Conversely, dense reward structures provide frequent feedback, allowing agents to learn more rapidly. These rewards can be given for incremental progress towards a goal, which facilitates quicker learning. For instance, in a robot navigation task, an agent might receive small positive rewards for moving closer to a target location, helping it learn effective navigation strategies more efficiently.

- Shaped Rewards: Shaping involves designing a reward structure that gradually guides the agent towards desired behaviors. This approach can incorporate both positive and negative feedback, helping to refine the learning process. An example of effective shaping could be in training an agent to play chess, where intermediate rewards are given for capturing pieces or controlling key squares, thus incentivizing strategic thinking throughout the game.
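The three structures above can be compared side by side on a toy navigation task. Everything here is an assumption for illustration: the one-dimensional corridor, the goal at cell 10, and the choice of potential-based shaping (a bonus equal to the change in a "potential", here the negative distance to the goal) as one well-known way to add shaping.

```python
GOAL = 10   # hypothetical target cell in a 1-D corridor


def sparse_reward(state, next_state):
    """Reward only when the goal is reached."""
    return 1.0 if next_state == GOAL else 0.0


def dense_reward(state, next_state):
    """Feedback on every step: negative distance to the goal."""
    return float(-abs(GOAL - next_state))


def shaped_reward(state, next_state, gamma=1.0):
    """Sparse reward plus a potential-based bonus for making progress."""
    def potential(s):
        return float(-abs(GOAL - s))
    bonus = gamma * potential(next_state) - potential(state)
    return sparse_reward(state, next_state) + bonus


# (state, next_state) pairs: a step forward, a step back, the final step.
for s, s2 in [(3, 4), (4, 3), (9, 10)]:
    print(s, s2, sparse_reward(s, s2), dense_reward(s, s2), shaped_reward(s, s2))
```

The sparse signal is silent until the very last step, while the shaped signal returns +1.0 for a step toward the goal and -1.0 for a step away, so the agent gets direction from the first move on.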

Effective reward structures can make the difference between a well-performing agent and one that fails to learn.

Examples of effective reward systems include those used in training autonomous vehicles, where agents receive immediate feedback for safe driving behaviors, leading to improved performance over time. In contrast, an ineffective reward system resembles a video game that penalizes players excessively for minor mistakes: the penalties discourage experimentation and hinder learning.

Implementing well-structured reward systems is essential for optimizing the learning capabilities of RL agents. By carefully designing reward mechanisms, practitioners can significantly enhance the efficiency and effectiveness of RL models in a wide range of applications.

The significance of simulation environments in Reinforcement Learning

In the realm of Reinforcement Learning (RL), simulation environments serve as crucial platforms where agents can learn to make decisions through trial and error. These environments replicate real-world scenarios, enabling agents to interact, learn from their actions, and improve their performance without the inherent risks and costs associated with real-world experimentation.

Simulated environments allow RL agents to explore vast action spaces, providing the flexibility to try various strategies and tackle complex problems, all while gathering feedback that is essential for learning. This approach not only accelerates the training process but also ensures that the agents can develop robust policies that are generalizable to real-world applications.

Role of simulated environments in training Reinforcement Learning agents

Simulated environments are integral to the effective training of RL agents. They provide a safe and controlled setting where agents can experiment with their decision-making processes. The key roles these environments play include:

- Risk Mitigation: Training in a simulation prevents potential hazards that could arise from real-world testing, especially in critical applications like autonomous driving or healthcare.

- Scalability: Simulations can be easily scaled to replicate complex scenarios that would be impractical to create in reality, enabling the evaluation of agents under diverse conditions.

- Data Generation: High-volume interactions in simulations produce extensive datasets that are invaluable for training and fine-tuning agents, leading to improved accuracy and efficiency.

- Rapid Iteration: Agents can rapidly iterate on their strategies in a simulated environment, allowing for faster learning cycles than would be possible in real-world settings.
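The data generation and rapid iteration roles above are easy to see in code. The toy simulator below mirrors the `reset`/`step` interface used by Gymnasium-style environments (`reset` returns an observation and an info dict; `step` returns observation, reward, terminated, truncated, info) but does not import any library. `CorridorEnv` and `collect` are hypothetical names, and the corridor task itself is an assumption for the example.

```python
import random


class CorridorEnv:
    """Toy simulator that mimics a Gymnasium-style reset/step interface."""

    def __init__(self, length=6, max_steps=50):
        self.length = length
        self.max_steps = max_steps
        self.position = 0
        self.steps = 0

    def reset(self, seed=None):
        # seed is accepted for interface compatibility; this toy env ignores it.
        self.position, self.steps = 0, 0
        return self.position, {}                        # (observation, info)

    def step(self, action):
        # action 0 moves left, action 1 moves right
        delta = 1 if action == 1 else -1
        self.position = min(max(self.position + delta, 0), self.length - 1)
        self.steps += 1
        terminated = self.position == self.length - 1   # goal reached
        truncated = self.steps >= self.max_steps and not terminated
        reward = 1.0 if terminated else 0.0
        return self.position, reward, terminated, truncated, {}


def collect(env, episodes, rng):
    """Roll out a random policy and gather transitions for later training."""
    transitions = []
    for _ in range(episodes):
        obs, _info = env.reset()
        done = False
        while not done:
            action = rng.randrange(2)
            next_obs, reward, terminated, truncated, _info = env.step(action)
            transitions.append((obs, action, reward, next_obs, terminated))
            obs, done = next_obs, terminated or truncated
    return transitions


data = collect(CorridorEnv(), episodes=100, rng=random.Random(0))
print(len(data), "transitions collected")
```

A hundred episodes run almost instantly here and nothing can be damaged by a bad action, which is the practical appeal of simulation. Keeping the simulator behind a standard interface also means the same training code can later be pointed at a richer environment.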

Popular simulation frameworks and their features

There are several popular frameworks that facilitate the creation of simulation environments suitable for training RL agents. Here are a few noteworthy options:

- OpenAI Gym: This framework provides a wide variety of environments behind a common interface, which makes it easy to plug in different RL algorithms. Its simplicity and extensive community support make it a popular choice among researchers. Active development has since moved to Gymnasium, a fork maintained by the Farama Foundation.

- Unity ML-Agents: Unity offers immersive 3D environments for RL training, allowing developers to create complex scenarios that mimic real-life challenges. This framework supports training across multiple agents and environments simultaneously.

- ROS (Robot Operating System): Primarily used in robotics, ROS is middleware rather than a simulator in its own right. Paired with simulation tools like Gazebo, which model physical interactions and dynamics, it is well suited to training agents for robotic applications.

- DeepMind Lab: This first-person 3D platform is designed for research on general-purpose agents. It provides intricate simulations where agents can navigate and learn in complex, interactive worlds.
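
Most of these frameworks expose an environment through a small reset/step interface. The sketch below imitates the Gymnasium convention, in which `reset()` returns `(observation, info)` and `step(action)` returns `(observation, reward, terminated, truncated, info)`, without importing any framework. `BatteryRobotEnv`, its reward values, and the two policies are hypothetical and exist only to show the shape of the interaction loop.

```python
class BatteryRobotEnv:
    """Hypothetical toy environment with a Gymnasium-style reset/step interface."""

    WORK, RECHARGE = 0, 1

    def __init__(self, capacity=3, max_steps=9):
        self.capacity = capacity
        self.max_steps = max_steps
        self.battery = capacity
        self.steps = 0

    def reset(self, seed=None):
        # seed is accepted for interface compatibility; this toy world is deterministic
        self.battery = self.capacity
        self.steps = 0
        return self.battery, {}

    def step(self, action):
        self.steps += 1
        if action == self.WORK:
            self.battery -= 1
            reward = 1.0
        else:
            self.battery = min(self.capacity, self.battery + 2)
            reward = 0.0
        terminated = self.battery <= 0  # the robot ran flat: a failure state
        if terminated:
            reward = -5.0
        # truncated marks a time limit, not a failure
        truncated = not terminated and self.steps >= self.max_steps
        return self.battery, reward, terminated, truncated, {}


def run_episode(env, policy):
    """Run one episode and return the total reward collected."""
    obs, _info = env.reset()
    total, done = 0.0, False
    while not done:
        obs, reward, terminated, truncated, _info = env.step(policy(obs))
        total += reward
        done = terminated or truncated
    return total


def always_work(battery):
    return BatteryRobotEnv.WORK


def recharge_when_low(battery):
    return BatteryRobotEnv.RECHARGE if battery <= 1 else BatteryRobotEnv.WORK


print(run_episode(BatteryRobotEnv(), always_work))        # -3.0
print(run_episode(BatteryRobotEnv(), recharge_when_low))  # 6.0
```

Keeping `terminated` (the task ended) separate from `truncated` (a time limit was hit) lets a learning algorithm tell a genuine failure apart from an episode that was merely cut short.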

Examples of enhanced learning efficiency and safety through simulation

Simulation environments not only enhance the efficiency of the learning process but also ensure the safety of the agents being trained. A few notable examples illustrate these points:

- Autonomous Vehicles: Companies like Waymo and Tesla use simulated environments to train their self-driving algorithms. Through simulations, they can expose their vehicles to countless driving scenarios, including rare and dangerous situations, without risking human lives.

- Healthcare Robotics: In the development of robotic surgical systems, simulations allow surgical techniques to be trained and refined on virtual patients, so that errors surface in simulation rather than in actual surgeries.

- Game Playing Agents: AlphaGo, developed by DeepMind, was first trained on games played by human experts and then refined its strategy through self-play. By playing millions of games against itself, it developed strategies that defeated top human professionals.

- Industrial Automation: Simulation environments assist in optimizing logistics and manufacturing processes. Robots can be trained in virtual factories to enhance their efficiency before deployment in real-world operations.

The integration of Reinforcement Learning with other AI methodologies

The landscape of artificial intelligence continues to evolve, fostering the integration of various methodologies to enhance overall performance. Specifically, the interplay between Reinforcement Learning (RL) and other paradigms like supervised and unsupervised learning presents an exciting frontier for research and practical applications. By leveraging the strengths of these different approaches, we can create more robust and capable AI systems.

Reinforcement Learning is particularly well-suited to complement traditional supervised and unsupervised learning methods. While supervised learning relies on labeled datasets to train models, and unsupervised learning focuses on identifying patterns without labels, RL introduces a framework where an agent learns optimal actions through interactions with an environment based on rewards and penalties. This unique characteristic allows RL to fill the gaps in supervised and unsupervised learning, particularly in scenarios involving dynamic decision-making.

Complementary Roles of Reinforcement Learning

Integrating RL with other methodologies can enhance performance in various applications. Here are a few examples illustrating this synergy:

- Combining RL with Supervised Learning: In scenarios like self-driving cars, supervised learning is used to identify objects and predict their behaviors based on labeled training data. RL complements this by allowing the vehicle to make real-time driving decisions, optimizing its path based on feedback from the environment. Systems such as Tesla’s Autopilot are often cited as examples of this pattern, with learned perception feeding a decision-making layer, although the exact role RL plays in production driving stacks is not publicly documented.

- Combining RL with Unsupervised Learning: In recommendation systems, unsupervised learning can cluster users based on their behavior, while RL optimizes the recommendations provided to each cluster based on user interactions. Platforms like Netflix are a commonly cited example: Netflix has publicly described using contextual bandits, a simplified form of RL, to personalize the artwork shown to each user.
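
The recommendation example can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of any real platform: the user feature (`watch_minutes`), the simulated click probabilities, the tiny 1-D k-means routine, and the epsilon-greedy bandit standing in for the RL component are all hypothetical choices made to keep the example self-contained.

```python
import random


def kmeans_1d(values, k=2, iters=20):
    """Tiny 1-D k-means (k >= 2); centroids start evenly spaced between min and max."""
    lo, hi = min(values), max(values)
    centroids = [lo + (hi - lo) * i / (k - 1) for i in range(k)]
    labels = [0] * len(values)
    for _ in range(iters):
        labels = [min(range(k), key=lambda c: abs(v - centroids[c])) for v in values]
        for c in range(k):
            members = [v for v, lab in zip(values, labels) if lab == c]
            if members:
                centroids[c] = sum(members) / len(members)
    return labels, centroids


class EpsilonGreedyBandit:
    """One bandit per user cluster; each arm is a candidate item to recommend."""

    def __init__(self, n_arms, epsilon, rng):
        self.epsilon = epsilon
        self.rng = rng
        self.counts = [0] * n_arms
        self.values = [0.0] * n_arms  # running mean reward per arm

    def select(self):
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(len(self.values))
        return max(range(len(self.values)), key=lambda a: self.values[a])

    def update(self, arm, reward):
        self.counts[arm] += 1
        self.values[arm] += (reward - self.values[arm]) / self.counts[arm]


def train_recommender(rounds=4000, seed=0):
    rng = random.Random(seed)
    # Unsupervised step: group users by a single behavioural feature (assumed data)
    watch_minutes = [5, 7, 6, 50, 55, 52]
    labels, _centroids = kmeans_1d(watch_minutes, k=2)
    # Simulated ground truth: probability that each cluster clicks item 0 or item 1
    click_prob = {0: [0.8, 0.2], 1: [0.1, 0.9]}
    bandits = {c: EpsilonGreedyBandit(2, 0.1, rng) for c in set(labels)}
    # RL step: learn from click feedback which item to show each cluster
    for _ in range(rounds):
        user = rng.randrange(len(watch_minutes))
        cluster = labels[user]
        item = bandits[cluster].select()
        reward = 1.0 if rng.random() < click_prob[cluster][item] else 0.0
        bandits[cluster].update(item, reward)
    best = {c: max(range(2), key=lambda a: b.values[a]) for c, b in bandits.items()}
    return labels, best


labels, best_item = train_recommender()
print("cluster per user:", labels)
print("preferred item per cluster:", best_item)
```

Clustering first means the feedback from every user in a group updates the same bandit, so each group's preferences are learned from far fewer interactions than a per-user model would need.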

Challenges and Benefits of Integration

Integrating Reinforcement Learning with other AI methodologies presents both challenges and advantages. Understanding these aspects is crucial for researchers and practitioners alike.

- Challenges:

  - Complexity: The integration of different methodologies can lead to increased system complexity, requiring more sophisticated algorithms and infrastructure.

  - Data Requirements: Hybrid models often demand extensive datasets for training, which can be difficult to obtain and may require significant preprocessing.

  - Stability and Convergence: Ensuring that the combined model converges to an optimal solution can be tricky and may require careful tuning of parameters.

- Benefits:

  - Enhanced Performance: Hybrid models can achieve superior performance by utilizing the strengths of different methodologies, leading to better predictions and decision-making capabilities.

  - Adaptability: The integration allows systems to adapt to dynamic environments more effectively, which is particularly valuable in fields like robotics and autonomous systems.

  - Improved Efficiency: Combining RL with supervised or unsupervised learning can lead to more efficient training processes, reducing time and computational resources required.

The integration of Reinforcement Learning with other AI methodologies not only enhances their capabilities but also drives innovation in various domains. By understanding and addressing the challenges, we can unlock the full potential of these hybrid models.

Final Summary

In conclusion, Reinforcement Learning stands at the forefront of technological advancements, offering tremendous potential for enhancing decision-making processes across various domains. As we continue to explore its capabilities, addressing its challenges and limitations will be essential in guiding its responsible development. The future of Reinforcement Learning is bright, promising to unlock new opportunities that could transform industries and elevate artificial intelligence to new heights.

FAQ Summary

What is the main goal of Reinforcement Learning?

The main goal of Reinforcement Learning is to train an agent to make a sequence of decisions by maximizing cumulative rewards through interaction with its environment.

How is Reinforcement Learning different from traditional supervised learning?

Unlike supervised learning, where the model is trained on labeled data, Reinforcement Learning relies on feedback from the environment in the form of rewards or penalties based on the agent’s actions.

Can Reinforcement Learning be used in real-time applications?

Yes, Reinforcement Learning can be applied in real-time applications such as autonomous driving, robotics, and dynamic game environments where immediate feedback is necessary.

What are some common challenges in implementing Reinforcement Learning?

Common challenges include the large number of environment interactions needed for training, potential instability during training, and the difficulty of designing reward structures that align with desired outcomes.

How does the balance between exploration and exploitation affect learning?

The balance between exploration (trying new actions) and exploitation (leveraging known successful actions) is crucial; too much exploration can lead to inefficiency, while excessive exploitation can prevent the discovery of better strategies.
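
The trade-off can be seen in a small experiment. The sketch below assumes a hypothetical three-armed bandit with payout probabilities 0.2, 0.5, and 0.8, and compares three settings of an epsilon-greedy rule: `epsilon=0.0` (pure exploitation), `epsilon=1.0` (pure exploration), and `epsilon=0.1` (a mix). The function name and all numbers are illustrative assumptions.

```python
import random


def run_bandit(epsilon, arm_probs=(0.2, 0.5, 0.8), rounds=5000, seed=0):
    """Play an epsilon-greedy strategy; return (average reward, pulls per arm)."""
    rng = random.Random(seed)
    counts = [0] * len(arm_probs)
    values = [0.0] * len(arm_probs)  # running estimate of each arm's payout
    total = 0.0
    for _ in range(rounds):
        if rng.random() < epsilon:
            arm = rng.randrange(len(arm_probs))  # explore: try any arm
        else:
            # exploit: pick the arm with the best estimate (ties go to the first arm)
            arm = max(range(len(arm_probs)), key=lambda a: values[a])
        reward = 1.0 if rng.random() < arm_probs[arm] else 0.0
        counts[arm] += 1
        values[arm] += (reward - values[arm]) / counts[arm]
        total += reward
    return total / rounds, counts


for eps in (0.0, 0.1, 1.0):
    avg, counts = run_bandit(eps)
    print(f"epsilon={eps}: average reward {avg:.3f}, pulls per arm {counts}")
```

With `epsilon=0.0` the agent never leaves the first arm it tries, so it never learns that a better arm exists. With `epsilon=1.0` it samples every arm but never uses what it has learned. A small non-zero epsilon finds the best arm and then spends most of its pulls on it.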