Latency is a crucial element in the world of networking that can significantly affect how we interact with technology. As we navigate through online applications and services, latency plays a silent yet powerful role in shaping our experiences. From the speed of our internet connections to the responsiveness of cloud services, understanding latency can help us enhance our digital interactions and optimize system performance.

This exploration into latency dives deep into its various forms, the factors that influence it, and the strategies we can employ to manage it effectively. By examining different types of latency and their implications on real-world applications, we can uncover the intricate relationship between latency and user satisfaction.

Latency in Networking and Its Importance

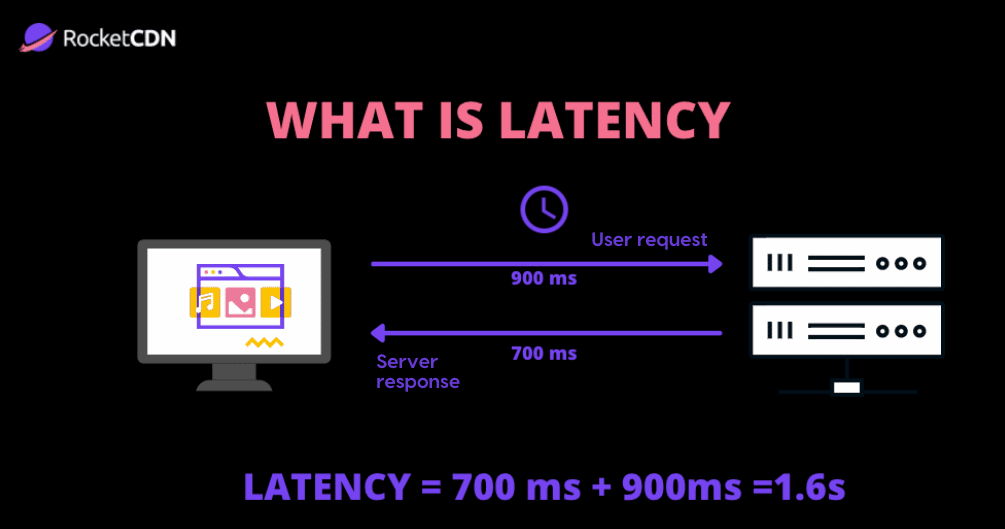

Latency is a critical concept in networking that refers to the delay before a transfer of data begins following an instruction for its transfer. This delay can significantly impact the performance of communication systems and user experiences across various applications. Understanding latency is essential for network engineers and developers, as it affects everything from simple data requests to complex online interactions. A low-latency network ensures that data is transmitted quickly and efficiently, which is paramount in maintaining seamless online experiences.

Latency is influenced by several key factors within different types of networks. These factors include the physical distance between devices, the speed of the transmission medium, the number of devices the data passes through, and the processing time at each node. Each of these elements contributes to the overall latency experienced by users. In addition, network congestion can further exacerbate latency by creating bottlenecks that slow down data transmission. Recognizing these influences is crucial for optimizing network performance.

Factors Affecting Latency in Networking

Several factors significantly impact latency in networking, and understanding these can help in designing more efficient systems. The following points outline the main contributors to latency:

- Distance: The physical distance between the source and destination of the data directly affects latency. Longer distances typically result in higher latency due to the time it takes for signals to travel.

- Transmission Medium: Different media carry signals with different characteristics. Fiber optic links, for example, suffer less attenuation and interference than copper cables, so they can span longer distances with fewer repeaters and retransmissions, which generally keeps latency lower on long routes.

- Router and Switch Processing: Data packets must be processed by routers and switches before they reach their destination. The processing time can introduce additional latency, particularly if the devices are under heavy load.

- Network Congestion: High traffic on a network can lead to delays as packets queue up waiting to be processed. This congestion can occur during peak usage times, significantly impacting latency.
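To put the distance factor in numbers, here is a minimal sketch of a propagation-delay estimate. It assumes light in optical fiber travels at roughly two-thirds of its vacuum speed; the 6,000 km path is a made-up example, and real routes are rarely straight lines.

```python
# Rough one-way propagation delay over a fiber path.
# Assumption: light in fiber travels at about 2/3 of its speed in a vacuum.

SPEED_OF_LIGHT_KM_S = 299_792.458   # speed of light in a vacuum, km/s
FIBER_VELOCITY_FACTOR = 0.67        # assumed typical value for optical fiber


def propagation_delay_ms(distance_km: float,
                         velocity_factor: float = FIBER_VELOCITY_FACTOR) -> float:
    """Return the one-way propagation delay in milliseconds."""
    if distance_km < 0 or not 0 < velocity_factor <= 1:
        raise ValueError("distance must be >= 0 and velocity factor in (0, 1]")
    return distance_km / (SPEED_OF_LIGHT_KM_S * velocity_factor) * 1000


# Hypothetical 6,000 km path: roughly 30 ms one way,
# before any queuing or processing delay is added.
print(f"{propagation_delay_ms(6000):.1f} ms")
```

This is a floor rather than a prediction: router processing and congestion, the other factors listed above, only add to it.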

Latency has a profound impact on user experience in online applications and services. For instance, in gaming, even a few milliseconds of delay can influence the outcome of a game, making latency a critical factor for competitive players. In video conferencing, high latency can result in lag, making conversations awkward and disjointed.

Low latency is essential for real-time applications, such as VoIP and online gaming, where timely data delivery is crucial for effective communication.

Moreover, in web browsing, latency affects page load times, which are vital for user retention and satisfaction. Users expect instant responses, and a delay can lead to frustration and abandonment of the site. Companies invest in Content Delivery Networks (CDNs) and edge computing solutions to minimize latency, ensuring users receive data from the nearest possible location. By addressing latency, organizations can enhance user experiences and drive engagement across their digital platforms.

Types of Latency and Their Implications

Latency can significantly impact the performance of various systems and applications. Understanding the different types of latency is crucial for optimizing performance and ensuring a seamless user experience. In this discussion, we explore the main types of latency, including network latency, processing latency, and transmission latency, and how each type can influence system functionality and user interactions.

Network Latency

Network latency refers to the time taken for data to travel from the source to the destination across a network. This type of latency can be influenced by various factors, including the physical distance between devices, the speed of the network connection, and the amount of traffic on the network. High network latency can lead to noticeable delays in applications such as online gaming, video conferencing, and real-time data streaming.

- In online gaming, high network latency can result in lag, making it difficult for players to enjoy a smooth experience, potentially leading to frustration and decreased engagement.

- For video conferencing tools, increased latency can cause delays in audio and video synchronization, affecting communication quality and participant interaction.

- In real-time data streaming, high network latency may cause buffering and interruptions, hindering the overall user experience.

“Network latency is critical for applications requiring real-time interactions, where delays can disrupt the flow of communication.”

Processing Latency

Processing latency is the time it takes for a system to process a request or a task once it has been received. This type of latency can occur due to factors such as server load, application performance, and the complexity of the tasks being executed. High processing latency can negatively affect user experience, particularly in environments where quick response times are crucial.

- In e-commerce websites, high processing latency can lead to slow page load times, resulting in potential customers abandoning their shopping carts.

- For financial trading platforms, delays in processing can result in missed opportunities due to the fast-paced nature of trading, leading to financial losses.

- In cloud-based applications, high processing latency can hinder the overall performance, affecting user satisfaction and productivity.

“Minimizing processing latency is essential for maintaining user engagement and ensuring efficient operations in time-sensitive applications.”

Transmission Latency

Transmission latency is the delay that occurs during the actual transmission of data across a medium, such as fiber optic cables or wireless connections. This type of latency is influenced by the bandwidth of the connection and the size of the data being transmitted. High transmission latency can affect applications that rely on the rapid transfer of large volumes of data.

- In data backup solutions, high transmission latency can lead to extended backup times, impacting recovery objectives and overall data management strategies.

- For streaming services, increased transmission latency can cause a degradation in video quality, with potential interruptions during playback.

- In IoT (Internet of Things) applications, high transmission latency can hinder real-time monitoring and control, affecting operational efficiency and decision-making.

“Transmission latency plays a vital role in scenarios where large amounts of data must be transferred quickly and efficiently.”
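Because transmission latency depends on data size and bandwidth, it can be estimated with simple arithmetic. The sketch below assumes an idealized link with no protocol overhead; the function name and the example figures are illustrative only.

```python
def transmission_delay_ms(size_bytes: int, bandwidth_bps: float) -> float:
    """Time to push size_bytes onto a link of bandwidth_bps, in milliseconds."""
    if bandwidth_bps <= 0:
        raise ValueError("bandwidth must be positive")
    return size_bytes * 8 / bandwidth_bps * 1000


# A single 1,500-byte packet on a 100 Mbit/s link: about 0.12 ms.
print(f"{transmission_delay_ms(1500, 100e6):.2f} ms")

# A 1 GB backup over a 1 Gbit/s link: about 8 seconds.
print(f"{transmission_delay_ms(10**9, 1e9) / 1000:.1f} s")
```

The same packet takes ten times longer on a 10 Mbit/s link, which is why bulk transfers feel this type of latency most.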

Comparative Implications of High vs. Low Latency

The implications of high latency versus low latency can vary significantly across different technologies and user activities. Low latency is generally desired for applications that require immediate feedback or real-time interactions, while high latency can lead to degraded performance and user dissatisfaction.

- In online gaming, low latency ensures a competitive advantage, allowing players to react swiftly, whereas high latency can lead to disconnections and a poor gaming experience.

- For cloud computing applications, low latency allows for faster data access and processing, improving overall operational efficiency, while high latency can result in delayed responses and decreased productivity.

- In financial services, low latency is critical for executing trades at optimal prices, whereas high latency can lead to missed trading opportunities and financial losses.

“Understanding the nuances of latency types helps in selecting the right technologies and strategies to minimize delays and enhance system performance.”

Measuring Latency

Measuring latency is crucial in assessing the performance of a network. Latency is the time data takes to travel from its source to its destination; most tools report it as round-trip time (RTT), the time for data to reach the destination and for the reply to come back. Understanding this metric helps in identifying bottlenecks and optimizing network configurations for better performance. With various tools and techniques available, accurately measuring and interpreting latency has become more accessible to network administrators.

Tools for Measuring Latency

There are several reliable tools available that can assist in measuring latency within networking environments. Each tool offers unique functionalities that cater to different needs and scenarios. Here are some of the most commonly used tools:

- Ping: A basic yet effective tool that sends ICMP echo requests to a target IP address and measures how long it takes to receive a response. It provides round-trip time (RTT) and packet loss information.

- Traceroute: This tool maps the path that packets take to reach a destination, measuring the time taken for each hop. It helps identify where latency occurs along the route.

- Wireshark: A network protocol analyzer that captures and displays packet data in real-time, allowing for detailed latency analysis and troubleshooting.

- iPerf: A versatile network testing tool that generates TCP/UDP traffic between a server and a client to measure throughput and, for UDP tests, jitter and packet loss, all of which help explain the latency users experience.

- MTR (My Traceroute): Combines the functionalities of Ping and Traceroute, offering continuous monitoring of the path and latency metrics over time.

Understanding these tools is essential for anyone looking to evaluate network performance effectively.

Guide to Accurately Measure Latency

To measure latency accurately using the above tools, follow these step-by-step guidelines for two of the most widely used methods: Ping and Traceroute.

Pinging a Host

1. Open a command prompt or terminal window on your device.

2. Type the command `ping [destination IP or hostname]` and hit Enter.

3. Observe the output, which will display the round-trip time for packets sent and received.

4. Note the average time, which is calculated based on several ping attempts.
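When ping is blocked or you want to measure from inside an application, timing a TCP handshake is a common stand-in. The sketch below is not ICMP ping: it assumes the target accepts TCP connections on the chosen port, and the function names are hypothetical. If you pass a hostname, the name lookup is included in the measured time.

```python
import socket
import time


def tcp_connect_time_ms(host: str, port: int, timeout: float = 2.0) -> float:
    """Return how long a TCP handshake with host:port takes, in milliseconds."""
    start = time.perf_counter()
    # create_connection completes the three-way handshake, about one round trip.
    with socket.create_connection((host, port), timeout=timeout):
        elapsed = time.perf_counter() - start
    return elapsed * 1000


def sample_connect_times(host: str, port: int, attempts: int = 5) -> list[float]:
    """Take several measurements, since any single sample can be an outlier."""
    return [tcp_connect_time_ms(host, port) for _ in range(attempts)]
```

A call such as `sample_connect_times("example.com", 443)` returns a list of millisecond values that you can average, much like the summary line ping prints.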

Using Traceroute

1. Open a command prompt or terminal window.

2. Enter the command `tracert [destination IP or hostname]` for Windows or `traceroute [destination IP or hostname]` for Linux/Mac.

3. Watch as the tool lists each hop between your device and the destination, along with the time taken for each segment.

4. Identify any hops with significantly higher latency, which may indicate potential issues.

These methods provide a foundational understanding of where latency may be occurring in a network.

Interpreting Latency Measurement Results

Once latency measurements are complete, interpreting the results is key to evaluating network performance. When analyzing the output, consider the following aspects:

- Round-Trip Time (RTT): Lower RTT values indicate a faster response time, while higher values may suggest network congestion or routing issues.

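- Packet Loss: Consistent packet loss at hops that also show high latency can indicate network instability and should be investigated further.

- Hop Analysis: In traceroute results, examine each hop’s latency. If one or more hops exhibit significantly higher times, and the increase carries through to the hops after them, they may be the source of delays. A spike at a single hop that does not persist further along the path often just means that router gives low priority to answering probes.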

- Comparative Analysis: Compare latency values across different times of day or under various network conditions to identify patterns or anomalies.

Each of these factors plays a critical role in diagnosing network performance issues and planning for necessary improvements. Always take multiple measurements for more accurate assessments, as network conditions can fluctuate.
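A small helper can turn a batch of measurements into the figures discussed above. This is a sketch under simple assumptions: the sample values are invented, and jitter is taken here as the mean absolute difference between consecutive samples, which is only one of several definitions in use.

```python
import statistics


def summarize_rtts(samples_ms: list[float]) -> dict[str, float]:
    """Summarize round-trip times (ms) from repeated measurements."""
    if not samples_ms:
        raise ValueError("need at least one sample")
    # Jitter here: mean absolute difference between consecutive samples.
    diffs = [abs(b - a) for a, b in zip(samples_ms, samples_ms[1:])]
    return {
        "min": min(samples_ms),
        "avg": statistics.fmean(samples_ms),
        "median": statistics.median(samples_ms),
        "max": max(samples_ms),
        "jitter": statistics.fmean(diffs) if diffs else 0.0,
    }


def packet_loss_percent(sent: int, received: int) -> float:
    """Share of probes that got no reply, as a percentage."""
    if sent <= 0 or not 0 <= received <= sent:
        raise ValueError("require sent > 0 and 0 <= received <= sent")
    return (sent - received) / sent * 100


# Invented samples: three normal replies and one slow outlier.
print(summarize_rtts([20.0, 22.0, 21.0, 80.0]))
print(packet_loss_percent(sent=10, received=9))
```

Comparing the median with the average is a quick way to spot outliers: here the single 80 ms sample pulls the average well above the median.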

Strategies to Reduce Latency in Systems

Reducing latency is a pivotal aspect of enhancing the performance of computing systems and networks. High latency can lead to slow response times, negatively impacting user experience and overall system efficiency. By implementing effective strategies, organizations can significantly minimize delays and improve communication speeds across various platforms.

Technological advancements and optimized procedures play crucial roles in latency reduction. Techniques vary from network optimizations to application-level adjustments. Utilizing caching mechanisms, deploying content delivery networks (CDNs), and optimizing data pathways are just a few methods that can lead to substantial improvements in system responsiveness.

Network Optimization Techniques

A variety of network optimization techniques can be employed to enhance performance and reduce latency. These techniques focus on streamlining data flow and optimizing how data packets are transmitted across networks.

One effective strategy is TCP optimization, which can enhance the efficiency of data transfer. By increasing the TCP window size, for example, a sender can keep more data in flight before it has to wait for acknowledgments, which matters most on high-latency links. This method has been successfully applied in cloud services, where companies like Amazon Web Services have optimized their TCP settings to ensure quicker data transfers.
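The effect of window size can be put in numbers with the bandwidth-delay product. The sketch below uses the simplified model of one window per round trip and ignores congestion control and packet loss; the function names and example values are illustrative assumptions.

```python
def bandwidth_delay_product_bytes(bandwidth_bps: float, rtt_s: float) -> float:
    """Bytes that must be in flight to keep a link of this speed and RTT full."""
    return bandwidth_bps * rtt_s / 8


def window_limited_throughput_bps(window_bytes: int, rtt_s: float) -> float:
    """Upper bound on throughput when at most one window is sent per round trip."""
    if rtt_s <= 0:
        raise ValueError("RTT must be positive")
    return window_bytes * 8 / rtt_s


# A 64 KiB window over a 100 ms round trip caps out near 5.2 Mbit/s,
# however fast the underlying link is.
print(f"{window_limited_throughput_bps(64 * 1024, 0.100) / 1e6:.2f} Mbit/s")

# Filling a 1 Gbit/s link at the same RTT needs about 12.5 MB in flight.
print(f"{bandwidth_delay_product_bytes(1e9, 0.100) / 1e6:.1f} MB")
```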

Another noteworthy strategy is the implementation of Quality of Service (QoS) protocols. QoS prioritizes certain types of traffic, ensuring that time-sensitive data, such as video or voice calls, is transmitted with minimal delays. A practical instance of this can be seen in telecommunication companies that prioritize emergency services communications over non-essential data traffic, achieving lower latency for critical applications.
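The core idea behind traffic prioritization can be sketched as a strict-priority queue. This is a toy model, not how any particular router implements QoS: the class, the traffic classes, and the packet labels are all hypothetical, and real schedulers usually add safeguards so low-priority traffic is not starved.

```python
import heapq
import itertools


class PriorityScheduler:
    """Strict-priority packet queue: lower priority number is sent first."""

    def __init__(self) -> None:
        self._heap: list[tuple[int, int, str]] = []
        self._order = itertools.count()   # tie-breaker keeps FIFO order per class

    def enqueue(self, packet: str, priority: int) -> None:
        heapq.heappush(self._heap, (priority, next(self._order), packet))

    def dequeue(self) -> str:
        """Return the next packet to transmit."""
        _, _, packet = heapq.heappop(self._heap)
        return packet

    def __len__(self) -> int:
        return len(self._heap)


VOICE, BULK = 0, 1   # hypothetical traffic classes

queue = PriorityScheduler()
queue.enqueue("backup-chunk-1", BULK)
queue.enqueue("voice-frame-1", VOICE)
queue.enqueue("backup-chunk-2", BULK)
queue.enqueue("voice-frame-2", VOICE)

# Voice frames leave first even though bulk data arrived earlier.
print([queue.dequeue() for _ in range(len(queue))])
```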

Content Delivery Networks (CDN)

Content Delivery Networks (CDNs) are crucial for minimizing latency, especially for web-based services. CDNs store cached versions of web content across various geographical locations. This means that when a user requests data, it can be delivered from the nearest server rather than having to reach a distant data center.

For instance, companies like Akamai and Cloudflare have deployed extensive CDN infrastructures that serve content to millions of users with reduced load times. By shortening the distance data travels, these CDNs significantly decrease latency, resulting in faster page loads and improved user satisfaction.
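As a rough illustration of "nearest server", the sketch below picks the closest edge location by great-circle distance. The edge names and coordinates are hypothetical, and this is a simplification: production CDNs typically steer users with DNS or anycast routing and measured network conditions rather than geography alone.

```python
import math

EARTH_RADIUS_KM = 6371.0

# Hypothetical edge locations as (latitude, longitude) in degrees.
EDGES = {
    "frankfurt": (50.11, 8.68),
    "new-york": (40.71, -74.01),
    "singapore": (1.35, 103.82),
}


def haversine_km(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Great-circle distance between two (lat, lon) points in kilometers."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def nearest_edge(user: tuple[float, float]) -> str:
    """Pick the edge location with the shortest great-circle distance."""
    return min(EDGES, key=lambda name: haversine_km(user, EDGES[name]))


print(nearest_edge((48.86, 2.35)))   # a user near Paris is served from "frankfurt"
```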

Best Practices for Developers and Network Engineers

When aiming to reduce latency, developers and network engineers should adopt best practices that ensure optimal system performance. The following points offer strategic insights into effective latency reduction:

To begin, consider the importance of optimizing application code. Efficient code can drastically reduce processing times and improve overall responsiveness. Additionally, employing asynchronous processing in applications allows tasks to run concurrently, minimizing wait times for users.
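To see why asynchronous processing cuts wait times, consider three independent I/O-bound calls. In this sketch `asyncio.sleep` stands in for real network or database requests, and the call names are made up for illustration.

```python
import asyncio
import time


async def fetch(name: str, delay_s: float) -> str:
    """Stand-in for an I/O-bound call such as a database or HTTP request."""
    await asyncio.sleep(delay_s)
    return name


async def fetch_all_concurrently() -> list[str]:
    # All three waits overlap, so the total is close to the slowest call.
    return await asyncio.gather(
        fetch("profile", 0.1),
        fetch("orders", 0.1),
        fetch("recommendations", 0.1),
    )


start = time.perf_counter()
results = asyncio.run(fetch_all_concurrently())
elapsed = time.perf_counter() - start

# Run one after another, the same calls would take about 0.3 s.
print(results, f"{elapsed:.2f} s")
```

This helps only when the calls do not depend on each other's results; a chain of dependent calls still has to run in order.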

Furthermore, utilizing local storage solutions can decrease latency by allowing applications to access data more quickly. For instance, web browsers often cache data locally to speed up loading times for frequently visited sites.
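The same idea applies inside an application. Here is a minimal sketch using Python's built-in `functools.lru_cache`; the `load_profile` function and its simulated 50 ms delay are hypothetical stand-ins for a slow remote lookup.

```python
import functools
import time


@functools.lru_cache(maxsize=128)
def load_profile(user_id: int) -> str:
    """Stand-in for a slow remote lookup; the sleep simulates network delay."""
    time.sleep(0.05)
    return f"profile-for-user-{user_id}"


first = load_profile(42)    # slow: goes to the "remote" source
second = load_profile(42)   # fast: served from the in-process cache

print(first == second, load_profile.cache_info())
```

The trade-off is freshness: a cached value can go stale, so real systems usually pair caching with an expiry time or an explicit invalidation step.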

Implementing load balancing techniques can also enhance system performance. By distributing incoming network traffic across multiple servers, latency is reduced as no single server becomes a bottleneck.
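One simple balancing policy is "least connections", sketched below. The class and server names are hypothetical, and the sketch leaves out what a production balancer needs, such as health checks and thread safety.

```python
class LeastConnectionsBalancer:
    """Send each new request to the server with the fewest active requests."""

    def __init__(self, servers: list[str]) -> None:
        if not servers:
            raise ValueError("need at least one server")
        self._active = {name: 0 for name in servers}

    def acquire(self) -> str:
        """Choose a server for a new request and count it as active."""
        # On ties, min() returns the first server in insertion order.
        server = min(self._active, key=self._active.get)
        self._active[server] += 1
        return server

    def release(self, server: str) -> None:
        """Mark one request on this server as finished."""
        if self._active[server] > 0:
            self._active[server] -= 1


balancer = LeastConnectionsBalancer(["app-1", "app-2", "app-3"])
print([balancer.acquire() for _ in range(4)])   # app-1, app-2, app-3, app-1
balancer.release("app-2")
print(balancer.acquire())                       # app-2 is now the least loaded
```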

Lastly, monitoring and analyzing network performance regularly can identify latency issues before they affect users. Tools that provide real-time insights into network traffic and performance metrics enable swift action to rectify potential delays.

“Minimizing latency is not just a technical challenge; it’s a pathway to delivering superior user experiences.”

The Role of Latency in Cloud Computing

Latency plays a crucial role in cloud computing, directly influencing the performance and responsiveness of cloud services. In this context, latency refers to the time taken for data to travel from the source to the destination and back. High latency can lead to slower application responses, affecting user experience and overall system efficiency. Therefore, understanding and managing latency is essential for cloud service providers to deliver reliable and efficient services.

The impact of latency on cloud computing services is multifaceted, affecting various aspects, including application performance, user satisfaction, and operational efficiency. When latency is high, users may experience delays in accessing applications, slow loading times, and an overall sluggish experience. This can be particularly detrimental in scenarios where real-time data processing is critical, such as in financial trading or online gaming. Additionally, applications relying on microservices architectures can suffer from compounded latency, as multiple service calls increase the total response time.
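The compounding effect in microservices is easy to see with a little arithmetic. The sketch below uses invented per-service latencies and ignores network overhead between services; it only contrasts calls made in sequence with independent calls made in parallel.

```python
def sequential_latency_ms(call_latencies_ms: list[float]) -> float:
    """Calls made one after another: the delays add up."""
    return sum(call_latencies_ms)


def parallel_latency_ms(call_latencies_ms: list[float]) -> float:
    """Independent calls made at the same time: the slowest one dominates."""
    return max(call_latencies_ms, default=0.0)


service_calls = [12.0, 30.0, 8.0]   # hypothetical per-service latencies in ms

print(sequential_latency_ms(service_calls))   # 50.0
print(parallel_latency_ms(service_calls))     # 30.0
```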

Measures to Manage and Optimize Latency

To enhance performance and minimize latency, cloud service providers implement various strategies and technologies. Understanding how these measures work is essential for users looking to maximize their cloud experience.

- Content Delivery Networks (CDNs): CDNs distribute content across multiple geographical locations, allowing users to access data from the nearest node, thereby reducing latency.

- Load Balancing: By distributing incoming traffic across multiple servers, load balancing helps ensure that no single server becomes a bottleneck, improving response times.

- Edge Computing: Processing data closer to the source reduces the distance data must travel, significantly lowering latency for applications that require quick responses.

- Optimized Network Protocols: Cloud providers utilize advanced networking protocols to enhance data transmission efficiency and reduce latency, ensuring a more responsive user experience.

The challenges of latency in cloud services differ between public and private environments. Public cloud services face inherent latency issues due to their shared nature, where multiple users access the same resources simultaneously. This shared infrastructure can lead to network congestion and increased response times, particularly during peak usage.

Conversely, private cloud environments offer more control over resources, which can help mitigate latency challenges. Organizations can tailor their private clouds to meet specific performance needs, optimizing hardware and network configurations for lower latency. For example, a financial institution using a private cloud may implement dedicated circuits for critical applications, ensuring that latency remains minimal even during high-demand periods.

“Lower latency not only enhances the user experience but also drives operational efficiency, making it a key component for organizations leveraging cloud computing.”

In summary, latency is a vital consideration in cloud computing, significantly affecting application performance and user satisfaction. Service providers take a range of measures to manage and optimize latency, while the challenges differ between public and private cloud environments, with each presenting unique considerations for optimal application performance.

Latency in Gaming and Its Impact on Player Experience

Latency plays a crucial role in shaping the online gaming experience, as it determines how quickly a player’s actions are registered and reflected in the game environment. In a world where split-second decisions can mean the difference between victory and defeat, understanding and minimizing latency is vital for maintaining player engagement and satisfaction. High latency can lead to frustrating gameplay, resulting in players feeling disconnected from their actions and the game itself.

The technical challenges related to latency in gaming are multifaceted, impacting both developers and players. When players engage in online multiplayer games, latency can arise from various sources, including network speed, server response time, and even the player’s hardware capabilities. Game developers are tasked with creating robust systems that can handle high volumes of data while minimizing delays. This involves optimizing network protocols, managing server load, and ensuring that the game’s code runs efficiently.

Technical Challenges in Reducing Latency

To effectively mitigate latency, game developers face several technical hurdles that require innovative solutions. These challenges include:

- Network Optimization: Ensuring that data packets travel swiftly without getting lost or delayed is essential. This requires the implementation of efficient routing protocols and possibly the use of dedicated servers located closer to players.

- Server Performance: High server load can lead to increased latency. Developers must scale server infrastructure dynamically to accommodate varying player volumes, ensuring consistent performance regardless of the player count.

- Client-Side Processing: The game client must efficiently process inputs and render visuals quickly. Developers often employ techniques like predictive algorithms that anticipate player actions to smooth out gameplay.
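One of the simplest predictive techniques is dead reckoning: between server updates, the client extrapolates a position from the last known velocity. The sketch below is a bare-bones illustration with hypothetical names; real games also correct the prediction smoothly once the next authoritative update arrives.

```python
from dataclasses import dataclass


@dataclass
class PlayerState:
    x: float
    y: float
    vx: float          # velocity in units per second
    vy: float
    timestamp: float   # server time of this update, in seconds


def predict_position(last: PlayerState, now: float) -> tuple[float, float]:
    """Extrapolate a position from the last known state (dead reckoning)."""
    dt = max(0.0, now - last.timestamp)
    return (last.x + last.vx * dt, last.y + last.vy * dt)


# The last update is stamped t = 10.0 s; the client renders at t = 10.1 s
# while it waits for the next one, so the player is drawn near (102, 49).
state = PlayerState(x=100.0, y=50.0, vx=20.0, vy=-10.0, timestamp=10.0)
print(predict_position(state, now=10.1))
```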

Addressing these challenges is critical for maintaining a smooth gaming experience. As new technologies emerge, game developers continue to reduce latency, allowing for more immersive and responsive gameplay.

Advancements in Gaming Technology to Combat Latency

Recent advancements in gaming technology are aimed at combating latency issues, particularly in competitive gaming environments. These include:

- 5G Networks: The rollout of 5G technology promises significantly reduced latency, enabling faster data transmission. This is particularly beneficial for mobile gaming and areas where wired connections are impractical.

- Edge Computing: By processing data closer to the user, edge computing reduces the distance data must travel, thereby lowering latency. This technology allows for quicker responses from game servers, enhancing real-time gameplay.

- Real-Time Game Streaming: Cloud gaming platforms such as NVIDIA GeForce Now, and Google Stadia before it shut down in 2023, try to keep latency low through optimized data handling and server placement, allowing players to enjoy high-quality gaming without the need for powerful local hardware.

As competitive gaming continues to grow, the focus on minimizing latency remains paramount. Innovations in technology strive to create seamless experiences that enhance player satisfaction and engagement, thus shaping the future of gaming.

Future Trends in Latency Management

Latency management continues to evolve as technology advances, impacting various sectors reliant on real-time data processing and communication. With the rapid expansion of digital services and the increasing demand for instant connectivity, understanding future trends in latency management is essential for businesses and consumers alike. Emerging technologies such as 5G, edge computing, and artificial intelligence hold significant promise for reducing delays in communication, enhancing user experiences, and enabling new applications.

Impact of 5G on Latency

5G technology is set to revolutionize connectivity, with theoretical peak speeds often cited as up to 100 times faster than those of its predecessor, 4G. This leap in mobile network capabilities has substantial implications for latency reduction. The ultra-reliable low-latency communication (URLLC) feature of 5G targets latencies as low as 1 millisecond on the radio link, although end-to-end delays in deployed networks are typically higher. That makes it well suited to applications requiring immediate responses, such as autonomous vehicles and remote surgery. As 5G networks become more widespread, industries will experience reduced latency, directly impacting user experiences and operational efficiencies.

Role of Edge Computing in Latency Management

Edge computing brings data processing closer to the source, reducing the distance data must travel and minimizing latency. By distributing compute resources geographically, organizations can ensure that data is analyzed and acted upon quickly. This is particularly relevant for IoT devices that generate vast amounts of data, requiring rapid processing to enable real-time decision-making. For instance, smart factories utilizing edge computing can immediately respond to changes in production lines, significantly enhancing throughput and reducing downtime.

Influence of Artificial Intelligence on Latency Reduction

Artificial intelligence (AI) technologies can further optimize latency management through predictive analytics and intelligent routing. By analyzing vast datasets in real-time, AI can forecast bandwidth needs and adjust network traffic dynamically. This results in improved data transmission efficiency and lower latency. For example, content delivery networks (CDNs) powered by AI can optimize the delivery of media by analyzing user patterns and adjusting data routes accordingly, ensuring that users receive content with minimal delays.

Speculative Analysis of Evolving Latency Challenges

As technology progresses, the landscape of latency management will continue to evolve, presenting both new challenges and opportunities. Increased connectivity and device proliferation will lead to greater data volumes, potentially creating bottlenecks in network performance. Moreover, as organizations adopt more sophisticated technologies, the complexity of networks will grow, making it imperative to implement robust latency management strategies. Emerging concepts like network slicing in 5G could mitigate these challenges by allowing operators to create customized network configurations tailored to specific applications, thereby ensuring optimal performance.

“In the era of 5G, edge computing, and AI, the future of latency management promises significant advancements, driving industries toward unprecedented efficiency and responsiveness.”

Conclusion

In conclusion, grasping the complexities of latency is vital as technology continues to evolve. By recognizing its impact on networking, gaming, and cloud computing, we can better prepare ourselves for future challenges. As new advancements emerge, the ongoing quest for lower latency will undoubtedly shape how we engage with our digital world, making it essential for developers and users alike to stay informed and proactive.

FAQs

What is latency in networking?

Latency in networking refers to the time it takes for data to travel from one point to another, impacting communication speed and responsiveness.

How is latency measured?

Latency is typically measured in milliseconds (ms) using tools like ping, traceroute, or specialized network performance monitoring software.

What causes high latency?

High latency can be caused by network congestion, physical distance between devices, poor routing, and inefficient hardware or software.

How does latency affect online gaming?

In online gaming, high latency can lead to lag, disrupting gameplay and impacting player satisfaction and engagement.

Can latency be reduced?

Yes, latency can be reduced through various strategies, including optimizing network routes, using faster connections, and leveraging edge computing.

What is the difference between latency and bandwidth?

Latency refers to the delay in data transmission, while bandwidth measures the amount of data that can be transmitted over a network in a given time.